Aerospace Cognitive Engineering (ACE) Laboratory

Lab Overview

The mission of the Aerospace Cognitive Engineering (ACE) Lab is to develop and demonstrate methods that improve the design and evaluation of complex, safety-critical automation systems. Following the tradition of cognitive engineering and cognitive systems engineering, the group applies principles from cognitive science, human factors engineering/ergonomics, human-systems integration, human-systems engineering, and systems engineering to complex, safety-critical systems.

ACE Lab methods and tools help clearly define the tasks and associated performance metrics for automation functions, and predict interaction performance using models of human and system performance. ACE Lab research examines human-automation interaction in safety-critical systems in both the aeronautics and space domains, including the potential for failures in these interactions. Failures in complex, safety-critical systems often result from unexpected interactions among the task, the environment, system components, and human operators. Designing and evaluating complex automated systems therefore requires specialized formalisms to understand and communicate the environment, system performance, and potential problems.

ACE Lab members have primarily applied their expertise and methods to automation across different categories and classes of aircraft, but have also supported spacecraft mission applications. A primary goal of the lab has been to help improve safety amid the ever-increasing complexity of modern cockpits and the ever-changing nature of aviation technology.

Research Areas/Projects

Assessing System Performance

Traditional human-automation interaction analysis techniques rely on human subject experimentation, simulation, and qualitative observation. While these can provide meaningful insights into the system being evaluated, each can examine only a limited number of the possible combinations of system conditions and human-automation interactions that might result in failures.

Tools for Assessment

Formal methods can address this limitation: a variety of automated or semi-automated tools can be used to mathematically prove whether a model of a system adheres to desirable system properties across all of its possible behaviors. We are exploring how these formal methods can complement other human-automation interaction analyses and thus assist in the design, analysis, verification, validation, and certification of complex systems.
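To make the idea concrete, here is a minimal sketch of one formal technique, explicit-state invariant checking: enumerate every reachable state of a small model and either confirm that a property holds in all of them or return a counterexample trace. The mode names (VS for vertical speed, ALT_CAP, ALT_HOLD), the transitions, and the helpers `check_invariant` and `make_successors` are hypothetical, invented for illustration; they do not describe any particular autopilot or ACE Lab tool.

```python
from collections import deque
from typing import Callable, Dict, Iterable, List, Optional, Tuple

# Hypothetical toy state: (autopilot vertical mode, altitude capture armed?)
State = Tuple[str, bool]


def check_invariant(
    initial: State,
    successors: Callable[[State], Iterable[State]],
    invariant: Callable[[State], bool],
) -> Optional[List[State]]:
    """Explore every reachable state breadth-first.

    Returns None if the invariant holds in all reachable states, otherwise the
    shortest trace (list of states) from the initial state to a violation.
    """
    parent: Dict[State, Optional[State]] = {initial: None}
    queue = deque([initial])
    while queue:
        state = queue.popleft()
        if not invariant(state):
            # Walk the parent links back to the start to build a counterexample.
            trace: List[State] = []
            cursor: Optional[State] = state
            while cursor is not None:
                trace.append(cursor)
                cursor = parent[cursor]
            return list(reversed(trace))
        for nxt in successors(state):
            if nxt not in parent:  # visit each state at most once
                parent[nxt] = state
                queue.append(nxt)
    return None


def make_successors(disarm_on_vs_change: bool) -> Callable[[State], List[State]]:
    """Hypothetical mode logic; the flag toggles a deliberate design flaw."""

    def successors(state: State) -> List[State]:
        mode, armed = state
        nxt: List[State] = []
        if mode == "VS" and armed:
            nxt.append(("ALT_CAP", True))  # target altitude approached: capture engages
        if mode == "ALT_CAP":
            nxt.append(("ALT_HOLD", False))  # capture complete: level off, nothing armed
            # Pilot adjusts vertical speed during capture; the flawed design drops the arm.
            nxt.append(("VS", not disarm_on_vs_change))
        if mode == "ALT_HOLD":
            nxt.append(("VS", True))  # new altitude and V/S selected: capture re-armed
        return nxt

    return successors


def vs_implies_armed(state: State) -> bool:
    """Property: whenever the aircraft climbs or descends in VS, capture is armed."""
    mode, armed = state
    return mode != "VS" or armed


# Flawed design: prints [('VS', True), ('ALT_CAP', True), ('VS', False)]
print(check_invariant(("VS", True), make_successors(True), vs_implies_armed))
# Corrected design: prints None (property holds in every reachable state)
print(check_invariant(("VS", True), make_successors(False), vs_implies_armed))
```

Because the search is breadth-first, the counterexample it returns is a shortest path to the violation, which makes it easier to read as an operational scenario. Practical model checkers handle far larger state spaces, but the pass/fail-with-trace structure is the same.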

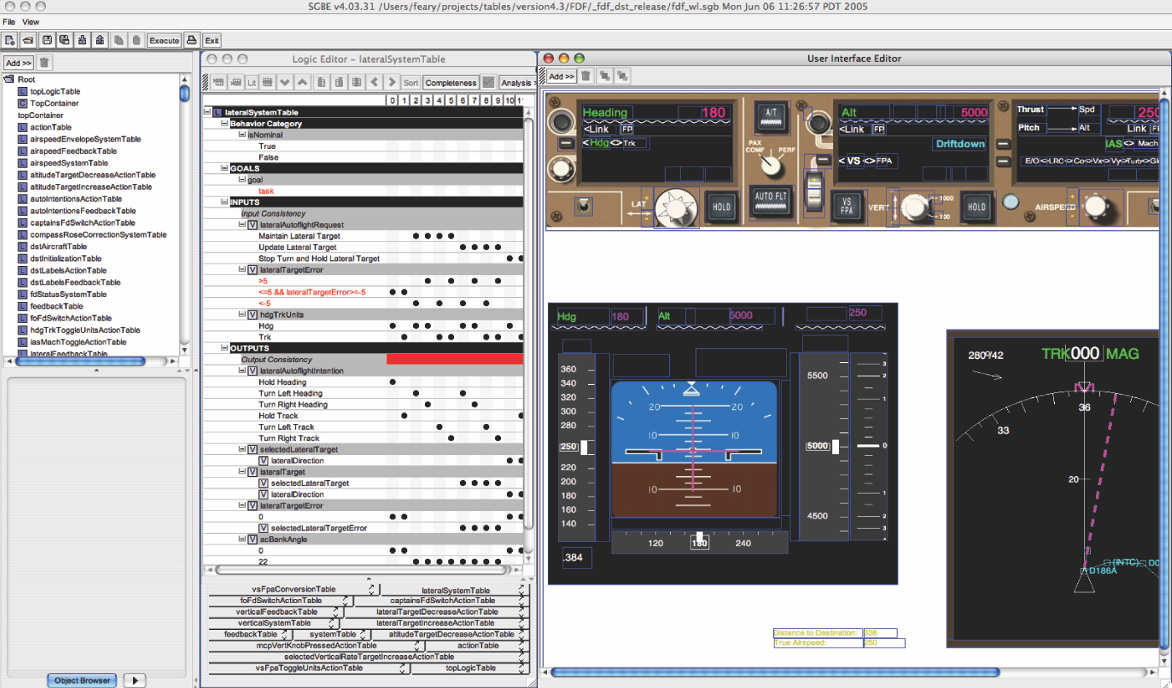

An example of a tool developed by the ACE Lab is the Automation Design and Evaluation Prototyping Environment (ADEPT). ADEPT combines the specification of logic with formal analysis techniques (e.g., completeness and non-determinism checks), a graphical interface editor, and an automatic code generator to create part-task simulations. The simulations and logic generated by the tool are intended to support the specification of requirements.
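The completeness and non-determinism checks mentioned above can be illustrated with a brute-force sketch over a small decision table: enumerate every combination of discrete inputs, then flag combinations that no rule covers (incompleteness) and combinations where rules select different outcomes (non-determinism). The rule format, the input variables, the mode names, and the `analyze_rules` helper are all hypothetical; this is not ADEPT's implementation, and exhaustive enumeration is only practical for small input domains.

```python
from itertools import product
from typing import Callable, Dict, List, Tuple

Inputs = Dict[str, object]
# A rule pairs the outcome it selects with a guard over the inputs (hypothetical format).
Rule = Tuple[str, Callable[[Inputs], bool]]


def analyze_rules(
    domains: Dict[str, List[object]], rules: List[Rule]
) -> Tuple[List[Inputs], List[Tuple[Inputs, List[str]]]]:
    """Brute-force completeness and non-determinism check over finite input domains."""
    names = list(domains)
    gaps: List[Inputs] = []  # combinations no rule covers (incompleteness)
    conflicts: List[Tuple[Inputs, List[str]]] = []  # rules disagree (non-determinism)
    for values in product(*(domains[name] for name in names)):
        inputs = dict(zip(names, values))
        outcomes = {outcome for outcome, guard in rules if guard(inputs)}
        if not outcomes:
            gaps.append(inputs)
        elif len(outcomes) > 1:
            conflicts.append((inputs, sorted(outcomes)))
    return gaps, conflicts


# Hypothetical mode-selection table with two deliberate defects.
domains = {"on_ground": [True, False], "speed": ["low", "high"]}
rules: List[Rule] = [
    ("TAXI", lambda i: i["on_ground"] and i["speed"] == "low"),
    ("TAKEOFF", lambda i: i["speed"] == "high"),  # missing the on_ground condition
    ("CLIMB", lambda i: not i["on_ground"] and i["speed"] == "high"),
    # no rule covers airborne at low speed
]

gaps, conflicts = analyze_rules(domains, rules)
print("gaps:", gaps)  # [{'on_ground': False, 'speed': 'low'}]
print("conflicts:", conflicts)  # [({'on_ground': False, 'speed': 'high'}, ['CLIMB', 'TAKEOFF'])]
```

Treating overlapping rules that agree on the same outcome as acceptable is a design choice in this sketch; a stricter check could flag any overlap between guards.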

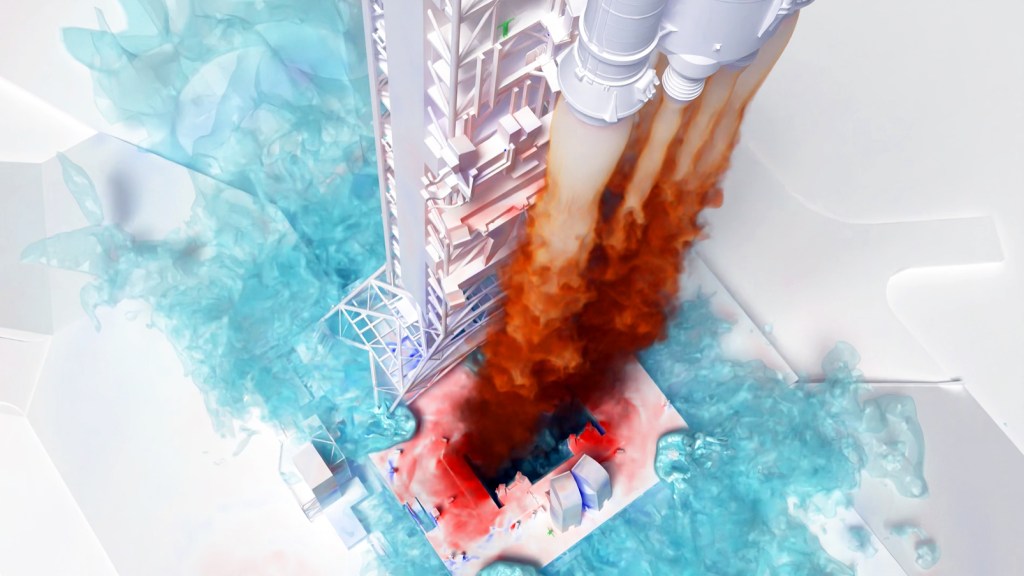

Assessment Through Simulation

ACEL-RATE (Aerospace Cognitive Engineering Lab Rapid Automation Test Simulator) is an adaptable fixed-base aircraft simulator focused on investigating the performance of, and interaction between, pilots and increasingly automated aircraft systems. The ACEL-RATE simulation capability provides ACE Lab scientists with a highly reconfigurable suite of hardware components and software tools that support research and development across a variety of environments. The simulator uses NASA-developed software called FlightDeckZ and a simple, reconfigurable cockpit placed within a 10-foot spherical dome, with a cluster of real-time image generators displaying highly realistic scenery and environmental objects.

FlightDeckZ Vehicle Simulation Environment

FlightDeckZ is a modular aircraft research environment, consisting of three major software components:

- FlightZ

- DeckZ

- FMSZ

FlightZ implements the vehicle performance, guidance, control, and autopilot functions. FlightZ can import aircraft models in different formats and currently includes high-fidelity models of small aircraft, fighter jets, helicopters, and regional and large transport aircraft, as well as eVTOL models developed as part of the NASA Revolutionary Vertical Lift Technologies (RVLT) project. Three aircraft performance models, representing multi-copter, Lift Plus Cruise, and Tilt-Wing configurations, were used in the Automation Enabled Pilot (AEP) studies described below. The aircraft models are representative of a subset of Advanced Air Mobility (AAM) industry concepts in the 6,000-pound weight class. None of the aircraft models tested included ground effect, atmospheric effects, or critical azimuth.

DeckZ implements virtual cockpit controls and graphical displays for the FlightZ vehicle model. These cockpit displays are available on the FlightDeckZ (FDz) host computer in addition to the eVTOL displays in the ACEL-RATE simulator.

FMSZ implements a Flight Management System (FMS) capability that provides navigation and guidance targets to the FlightZ aircraft models. FMSZ includes automatic takeoff and landing capabilities and is highly modifiable.

Advanced Air Mobility Investigations

The ACE Lab supports the Advanced Air Mobility (AAM) project. AAM is “a rapidly-emerging new sector of the aerospace industry that aims to safely and efficiently integrate highly automated aircraft into American airspace. AAM is not a single technology but rather a collection of new and emerging technologies being applied to aviation, particularly in new aircraft types. AAM is designed to deliver agile, affordable, and accessible flights to all Americans and drive infrastructure development, employment, and innovation.” (DOT, 2026).

To support AAM research on pilot-automation interaction, the ACE Lab started the Automation Enabled Pilot (AEP) study series. Initial AEP studies focused on assessing piloted eVTOL aircraft with Indirect Flight Control Systems (IFCS). The use of IFCS requires design decisions about desired behavior as the aircraft transitions from forward flight to low speed or hover. Some of these transitions occur regardless of aircraft configuration (e.g., airmass-referenced to ground-referenced flight, envelope protection), while others (e.g., the transition from wing-borne to thrust-borne flight) vary with aircraft configuration (e.g., Tilt-rotor, Tilt-Wing, Lift Plus Cruise). Industry proposals span a wide range, but the majority include onboard pilots until concepts of operations mature enough to allow remote piloting or autonomous operations. IFCS also enable aircraft applicants to deviate from conventional pilot station configurations (e.g., cyclic and collective inceptors, pedals). The AEP-2 study, conducted in 2024, examined isolated operational maneuvers and performance criteria in a flight test setting. AEP-3 examined the efficacy of concept UAM operations from the perspective of pilots flying representative eVTOL aircraft in purpose-built scenarios.

The most recent investigation, AEP-3, examined representative eVTOL aircraft and automation flying procedures designed to integrate into complex, high-tempo airspace with diverse aircraft types under a mid-term operations airspace concept. The procedure concept was based on a previous high-fidelity air traffic controller study, the Air Traffic Management Interoperability Simulation (AIS) study, which examined integrated Urban Air Mobility (UAM) operations in the Dallas Love Field airspace (Verma et al., 2024).

Publications

- Feary, M., Sherry, L., & Palmer, E. (2017). Developing training for a modern autopilot. Engineering Psychology and Cognitive Ergonomics: Volume Five-Aerospace and Transportation Systems, 233. (republished from 1999)

- Feary, M. (2016) Evaluation Methods for Human-Autonomy Teaming. Invited address to the “Will Air Transport be Fully Automated by 2050” Conference, June 1, 2016, Toulouse, FR.

- Feary, M. (2016) Evaluating Human-Autonomy Teaming: How Do We Ensure Resilience? Special Interest Group at the 34th Annual ACM CHI Conference on Human Factors in Computing Systems (CHI 2016), May 7-12, San Jose, CA.

- Feary, M. and Stewart, M. (2016) Understanding Current Safety Issues for Trajectory Based Operations, Communications, Navigation and Surveillance (CNS) Task Force Meeting, Feb. 10, Phoenix, AZ.

- Feary, M. S., & Roth, E. (2014). Some Challenges in the Design of Human-Automation Interaction for Safety-Critical Systems. 5th International Conference on Applied Human Factors and Ergonomics (AHFE 2014), July 19-23, 2014, Krakow, Poland.

- Feary, M. (2014) Modeling Hurts: Some Thoughts on the Applicability of Modeling for Human-Machine Interaction. AAAI Spring Symposium.

- Feary, M., Billman, D., Chen, X., Howes, A., Lewis, R., Sherry, L., Singh, S. (2013) Linking Context to Evaluation in the Design of Safety Critical Interfaces, Human-Computer Interaction. Human-Centered Design Approaches, Methods, Tools, and Environments Lecture Notes in Computer Science, Volume 8004, pp. 193-202

- Feary, M. (2012) Cognitive Systems Engineering: The Next 30 Years. Presentation as part of an invited panel at the 56th Annual Meeting of the Human Factors and Ergonomics Society, October 22-26, Boston, MA

- Feary, M. (2012) Evaluating Models of Human Performance: Safety-Critical Applications. Presentation as part of an invited panel at the 56th Annual Meeting of the Human Factors and Ergonomics Society, October 22-26, Boston, MA

- Pritchett, A. and Feary, M. (2011) Designing Human-Automation Interaction. Chapter 12 in The Handbook of Human-Machine Interaction, Guy Boy (Ed.), Ashgate Publishing Limited, Surrey, UK.

- Feary, M. (2011) Engineering Automation in Interactive Critical Systems. 29th ACM Conference on Human Factors in Computing Systems (CHI 2011), May 7-12, Vancouver, BC, Canada.

- Feary, M. (2011) Task Specification Language. Department of Defense Human Factors Engineering Technical Advisory Group, October 24-27, Tysons Corner, VA.

- Feary, M. (2010) Bridging the Gap between Human-Automation Modeling and Flight Deck Design. A panel at the 54th Human Factors and Ergonomics Society Annual Meeting (HFES 2010), September 28-October 1, San Francisco, CA.

- Feary, M. (2010) Predictive Risk Evaluation of Human-Automation Interaction for New Technologies. A panel at the 2010 Human-Computer Interaction in Aeronautics conference (HCI-Aero 2010), November 3-5, Orlando, FL.

- Feary, M. (2008) Development and Evaluation of the Automation Evaluation Design and Prototyping Toolset (ADEPT). 52nd Annual Meeting of the Human Factors and Ergonomics Society, September 22-26, New York City, NY.

- Feary, M. (2009) A Toolset for Supporting Iterative Human-Automation Interaction in Design. In Proceedings of MODSIM ’09, Virginia Beach, VA (Oct. 14-16).

- Feary, M. (2007). Automatic Detection of Interaction Vulnerabilities in an Executable Specification, In Engineering Psychology and Cognitive Ergonomics, Vol. 4562, pp. 487-496, Springer Berlin, Heidelberg, ISBN 978-3-540-73330-0

- Feary, M. (2006). Formal Identification of Automation Surprise Vulnerabilities in Design. PhD Thesis (In publication), Cranfield University, Bedfordshire, UK.

- Feary, M. and Sherry, L. (2004) Dynamic Storyboards: Faster, Cheaper Software Prototypes for Usability Evaluation. In Proceedings of Human Computer Interaction in Aviation 2004, Toulouse, FR.

- Feary, M., Sherry, L., Polson, P., and Fennell, K. (2003). Incorporating Cognitive Usability into Software Design Processes. In Harris, D., Duffy, V., Smith, M., and Stephanidis, C. (Eds.) Human-Centered Computing: Cognitive, Social, and Ergonomic Aspects. Volume 3 (pp. 427-431). Mahwah, NJ: Lawrence Erlbaum. ISBN 0-8058-4932-7

- Feary, M., Sherry, L., Polson, P., and Palmer, E. (2001). A Formal Method for Integrated System Design. 8th European Conference on Cognitive Science Approaches to Process Control (CSAPC ’01), Munich, Germany (September 24-26).

- Feary, M., Sherry, L., Polson, P., and Palmer, E. (2000). Evaluation of a Formal Methodology for Developing Aircraft Vertical Flight Guidance Training Material. In Proceedings of the International Conference on Human-Computer Interaction in Aeronautics, Toulouse, France (September 27-29).

- Feary, M., Sherry, L., Polson, P., and Palmer, E. (2000). Formal Method for Developing Part-Task Training for a Modern Autopilot. In Proceedings of the 3rd International Conference on Engineering Psychology and Cognitive Ergonomics, Edinburgh, Scotland (October 25-27).

- Feary, M., McCrobie, D., Alkin, M., Sherry, L., Polson, P., and Palmer, E. (1999). Aiding Vertical Guidance Understanding. Air and Space Europe, Volume 1, Issue 1 (pp. 38-41). Elsevier.

- Feary, M., and Barshi, I. (1999) “Why Is It Doing That?” Perspectives of the FMS. Paper presented at the Tenth International Symposium on Aviation Psychology, Columbus, OH (May 3-7).

- Feary, M., McCrobie, D., Alkin, M., Sherry, L., Polson, P., Palmer, E., and McQuinn, N. (1998). Aiding Vertical Guidance Understanding. Technical Memorandum No. NASA/TM-1998-112217. NASA Ames Research Center.

- Feary, M., McCrobie, D., Alkin, M., Sherry, L., Polson, P., Palmer, E., and McQuinn, N. (1998). Aiding Vertical Guidance Understanding. In Proceedings of the International Conference on Human-Computer Interaction in Aeronautics, Montreal, Quebec, Canada (May 27-29).

- Feary, M. and Sherry, L. (1998) Evaluation of a Formal Methodology for Developing Aircraft Vertical Flight Guidance Training Material. In Proceedings of the 42nd Annual Meeting of Human Factors and Ergonomics Society, Chicago, IL (October 5-9).

- Feary, M. and Sherry, L. (1998) Evaluating Flight Crew Operator Manual Documentation. In proceedings of IEEE International Conference on Systems, Man and Cybernetics, San Diego, CA (October 11-14).

- Feary, M., Palmer, E., Sherry, L., Polson, P., Alkin, M., and McCrobie, D. (1997) Behavior-Based vs. System-Based Training and Displays for Automated Vertical Guidance. In Proceedings of the Ninth International Symposium on Aviation Psychology, Columbus, OH (April 27-May 1).

- Feary, M. (1997) Control-Based vs. Guidance-Based Training and Displays for Automated Vertical Flight Guidance. Master's Thesis, San Jose State University, San Jose, CA.

- Feary, M., Nowinski, J. and Statler, I. (2012) NASA-easyJet Airline Collaboration on the Study of Fatigue Risk Management. Presentations October 1-2 at easyJet HQ, Luton Airport, England, UK, and October 3 at easyJet, Gatwick Airport, England, UK.

- Feary, M. (2012). System-Wide Safety Assurance: Human Systems Solutions. Presentation to the FAA-Eurocontrol Action Plan 15 for Safety, May 7-9, Washington, DC.

- Billman, D. and Feary, M. (2012) Needs Analysis in Safety-Critical Domains. Technical Memorandum (in progress).

- Pizziol, S., Tessier, C., Feary, M., & Dehais, F. (2015, September). Action reversibility in human-machine systems. In Proceedings of the 5th International Conference on Application and Theory of Automation in Command and Control Systems (pp. 21-31). ACM.

- Marquez, J. J., Feary, M., Rochlis Zumbado, J., & Billman, D. (2013). Evidence report: Risk of inadequate design of human and automation/robotic integration (NASA Technical Report). Houston, TX: Lyndon B. Johnson Space Center.

- Pizziol, S., Feary, M., Dehais, F. and Tessier, C. (2012) One Action Reversibility and Total Unrecoverability Analysis for ADEPT. In Proceedings of the Human Factors and Ergonomics Society Europe Chapter Annual Meeting, Toulouse, France, October 2012.

- Combéfis, S., Giannakopoulou, D., Pecheur, C., and Feary, M. (2011) A Formal Framework for Design and Analysis of Human-Machine Interaction. IEEE-SMC 2011, October 9-12, Anchorage, AK.

- Billman, D., Arsintescu, L., Feary, M., Lee, J., Smith, A., and Tiwary, R. (2011). Benefits of Matching Domain Structure for Planning Software: The Right Stuff. 29th ACM Conference on Human Factors in Computing Systems (CHI 2011), May 7-12, Vancouver, BC, Canada.

- Feary, M. (2011) Human Systems Research in NASA’s Aviation Safety Program. Department of Defense Human Factors Engineering Technical Advisory Group, May 2-5, Natick, MA.

- Bolton, M., Bass, E., Siminceanu, R. and Feary, M. (in progress) Applications of Formal Methods in Human Factors: Current Practices and Future Developments, Submitted to the Human Factors journal.

- Billman D., Feary, M., and Schreckenghost, D. (2010) Needs Analysis: The Case of Flexible Constraints and Mutable Boundaries, 28th ACM Conference on Human Factors in Computing Systems (CHI2010), April 10-15, Atlanta, GA.

- Billman, D. and Feary, M. (2010). Collective Intelligence and Three Aspects of Planning: A NASA Example, The 2010 Conference on Computer Supported Collaborative Work, Feb. 6-10, Savannah, GA. (paper accepted, poster in press)

- Sherry, L., Feary, M., Fennell, K. and Polson, P. (2009) Estimating the Benefits of Human Factors Engineering in NextGen Development: Towards a Formal Definition of Pilot Proficiency, In Proceedings of the AIAA Ninth Aviation, Technology, Integration and Operations Conference (ATIO), AIAA-2009-6974, Hilton Head, SC (Sept. 21-23).

- Sherry, L., Feary, M., Lard, J., and Fennell, K. (2009) Methodology for Estimation of Human-Computer Interaction Engineering in NextGen/SESAR Development. Eighth USA/Europe Air Traffic Management Research and Development Seminar (ATM2009), Paper #28, Napa, CA.

- Sherry, L., Feary, M. and Medina, M. (2009) Standards for Human-Computer Interaction (HCI) for NextGen High-Density Operations. 2009 ICNS conference, Arlington, VA.

- Sherry, L., Medina, M., Feary, M., and Otiker, J. (2008) Automated Tool for Task Analysis of NextGen Automation. In Proceedings of the Eighth Integrated Communications, Navigation and Surveillance (ICNS) Conference, pp. 1-9, Bethesda, MD, DOI 10.1109/ICNSURV.2008.4559185.

- Medina, M., Sherry, L., and Feary, M. (2008) Automation for Task Analysis of Next Generation Air Traffic Management Systems. In Proceedings International Conference in Air Transportation, Paper 52, Fairfax, VA. (ICRAT Best Paper award)

- Fennell, K., Sherry, L., Roberts, R. J., and Feary, M. (2006) Difficult Access – The Impact of Recall Steps on Flight Management Computer Errors, International Journal of Aviation Psychology, Vol. 16, 2, pp. 175-196.

- Sherry, L., Fennell, K., Feary, M., and Polson, P. (2006) Analysis of Ease-of-Use and Ease-of-Learning of a Modern Flight Management System. AIAA Journal of Aerospace Computing, Information, and Communication, 3, pp. 177-186.

- Sherry, L., Fennell, K., Feary, M. and Polson, P. (2006) Human-Computer Interaction Analysis of Flight Management System Messages, AIAA Journal of Aircraft, Vol. 43, 5, pp. 1372-1376.

- Sherry, L., Fennell, K., Feary, M., and Polson, P. (2005) A Human-Computer Interaction Analysis of Flight Management System Messages, NASA/TM-2005-213459, Ames Research Center, Moffett Field, California.

- Sherry, L. and Feary, M. (2004) Task Design and Verification Testing for Certification of Avionics Equipment. The 23rd Digital Avionics Systems Conference (DASC 04), Vol. 2, pp. 10.A.3-101-110, October 24-28, Salt Lake City, UT. (Best paper in track award)

- Sherry, L., Feary, M., Polson, P., and Fennell, K. (2003) Drinking from the Fire Hose: Why the Flight Management System Can Be Hard to Train and Difficult to Use, NASA/TM-2003-212274, Ames Research Center, Moffett Field, California.

- Sherry, L., Polson, P. and Feary, M. (2002) Designing User-Interfaces for the Cockpit: Five Common Design Errors, and How to Avoid Them. In Proceedings 2002 SAE World Aviation Congress, Phoenix, AZ (November 5 – 7).

- Sherry, L., Polson, P., Feary, M. and Palmer, E. (2002) When Does the MCDU Interface Work Well? International Conference on Human-Computer Interaction in Aeronautics, Cambridge, MA (October 22-24).

- Sherry, L., Feary, M., Polson, P., Mumaw, R., and Palmer, E. (2001) A Cognitive Engineering Analysis of the Vertical Navigation (VNAV) Function, NASA/TM-2001-210915, Ames Research Center, Moffett Field, California.

- Javaux, D., Sherry, L., and Feary, M. (1999) How a Cognitive Tutor Can Improve Pilot Knowledge of Mode Transitions. In proceedings of the Digital Avionics Systems Conference (DASC), St. Louis, MO

- Sherry, L., Quarry, S., Romahn, S., Prevôt, T., Crane, B., Feary, M., Oseguera-Lohr, R., Williams, D., and Palmer, E. (1999) Closing the Controller/Pilot Loop in the CNS/ATM TRACON: FMS Features to Support CTAS in the TRACON. Honeywell Publication C69-5370-011.

- Sherry, L., Polson, P., Feary, M., and Palmer, E. (1997). Analysis of the Behavior of a Modern Autopilot. Honeywell Publication C69-5370-009.

- Palmer, E., Cashion, P., Crane, B., Green, S., Feary, M., Goka, T., Johnson, N., Sanford, B., and Smith, N. (1997). Field Evaluation of Flight Deck Procedures for Flying CTAS Descents. In Proceedings of the Ninth International Symposium on Aviation Psychology, Columbus, OH (April 27-May 1).