Advanced Controls and Displays

Advanced Controls and Displays (ACD) research provided quantitative assessments of both human and system performance in virtual environments (VE) and advanced display systems. Human operators and systems were measured in order to design environments to fit human performance specifications.

- Types of displays studied include haptic displays, virtual acoustic (3-D audio) displays, head-mounted displays (both immersive and see-through), and situation awareness displays.

- Application areas include terminal area air traffic control, teleoperation, manufacturing, and manipulative tasks.

- Areas of expertise include visual, auditory, and proprioceptive performance, quantification of system performance, intelligent displays, acoustic modeling, and virtual controls.

Spatial Audio Displays/Virtual Acoustic Environments

Human Systems Integration Division researchers sought to enhance the intelligibility of multiple communication channels normally heard with one-ear headsets and reduce operator fatigue.

The ACD lab developed inexpensive audio technology using spatial auditory display techniques and two-channel headsets. Each communication channel was processed to sound as if it came from a different location, which let listeners use the binaural intelligibility advantage they rely on in everyday hearing. This technology was designed to be easily retrofitted into existing systems and could be customized for individual listeners via EPROM cards.
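The page does not describe the lab's signal processing, so the following is only a minimal sketch of the general idea of placing a mono channel at an apparent direction over a two-channel headset. It uses two generic cues as stand-ins: an interaural time difference from Woodworth's spherical-head approximation and a constant-power level difference. The function names, head radius, speed of sound, and sample rate are all assumptions for illustration, not values from the ACD work.

```python
import math
from typing import List, Tuple

SPEED_OF_SOUND = 343.0   # m/s, assumed room-temperature value
HEAD_RADIUS = 0.0875     # m, assumed average head radius


def itd_seconds(azimuth_deg: float) -> float:
    """Woodworth's spherical-head approximation of interaural time difference.

    Positive azimuth means the source is to the listener's right.
    Intended for azimuths between -90 and 90 degrees.
    """
    theta = math.radians(azimuth_deg)
    return (HEAD_RADIUS / SPEED_OF_SOUND) * (theta + math.sin(theta))


def pan_gains(azimuth_deg: float) -> Tuple[float, float]:
    """Constant-power (left, right) gains for an azimuth in [-90, 90]."""
    # Map -90..90 degrees onto 0..pi/2 so that left^2 + right^2 == 1.
    angle = (azimuth_deg + 90.0) / 180.0 * (math.pi / 2.0)
    return math.cos(angle), math.sin(angle)


def spatialize(mono: List[float], azimuth_deg: float,
               sample_rate: int = 8000) -> Tuple[List[float], List[float]]:
    """Return (left, right) sample lists with level and delay cues applied."""
    left_gain, right_gain = pan_gains(azimuth_deg)
    delay = round(abs(itd_seconds(azimuth_deg)) * sample_rate)
    pad = [0.0] * delay
    left = [s * left_gain for s in mono]
    right = [s * right_gain for s in mono]
    if azimuth_deg >= 0:
        # Source on the right: delay the far (left) ear.
        left, right = pad + left, right + pad
    else:
        # Source on the left: delay the far (right) ear.
        left, right = left + pad, pad + right
    return left, right
```

Giving each communication channel its own azimuth (for example -60, 0, and 60 degrees) and summing the resulting left and right signals would yield a simple multi-talker display. A production system would more likely use measured head-related transfer functions than these two cues alone.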

This spatial auditory technology yielded an improvement in speech intelligibility of up to 6 dB compared to one-ear headsets. Listener fatigue was reduced, thereby enhancing safety. The need to adjust individual volume controls was minimized, supporting hands-free operation. A U.S. patent has been granted for this research, allowing for technology transfer to other domains.
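To put the reported figure in perspective, the short sketch below converts a decibel difference into the equivalent power and amplitude ratios. It assumes the 6 dB figure is an ordinary level difference expressed in decibels. The page does not state how the improvement was measured, and the function names are hypothetical.

```python
def db_to_power_ratio(db: float) -> float:
    """Power ratio corresponding to a level difference in decibels."""
    return 10.0 ** (db / 10.0)


def db_to_amplitude_ratio(db: float) -> float:
    """Amplitude (pressure) ratio corresponding to a level difference in decibels."""
    return 10.0 ** (db / 20.0)


if __name__ == "__main__":
    print(round(db_to_power_ratio(6.0), 2))      # about 3.98
    print(round(db_to_amplitude_ratio(6.0), 2))  # about 2.0
```

Under that assumption, a 6 dB advantage is roughly equivalent to quadrupling the power of the speech signal relative to the competing sound, or doubling its amplitude.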

Visual Displays/Augmented Reality Interfaces

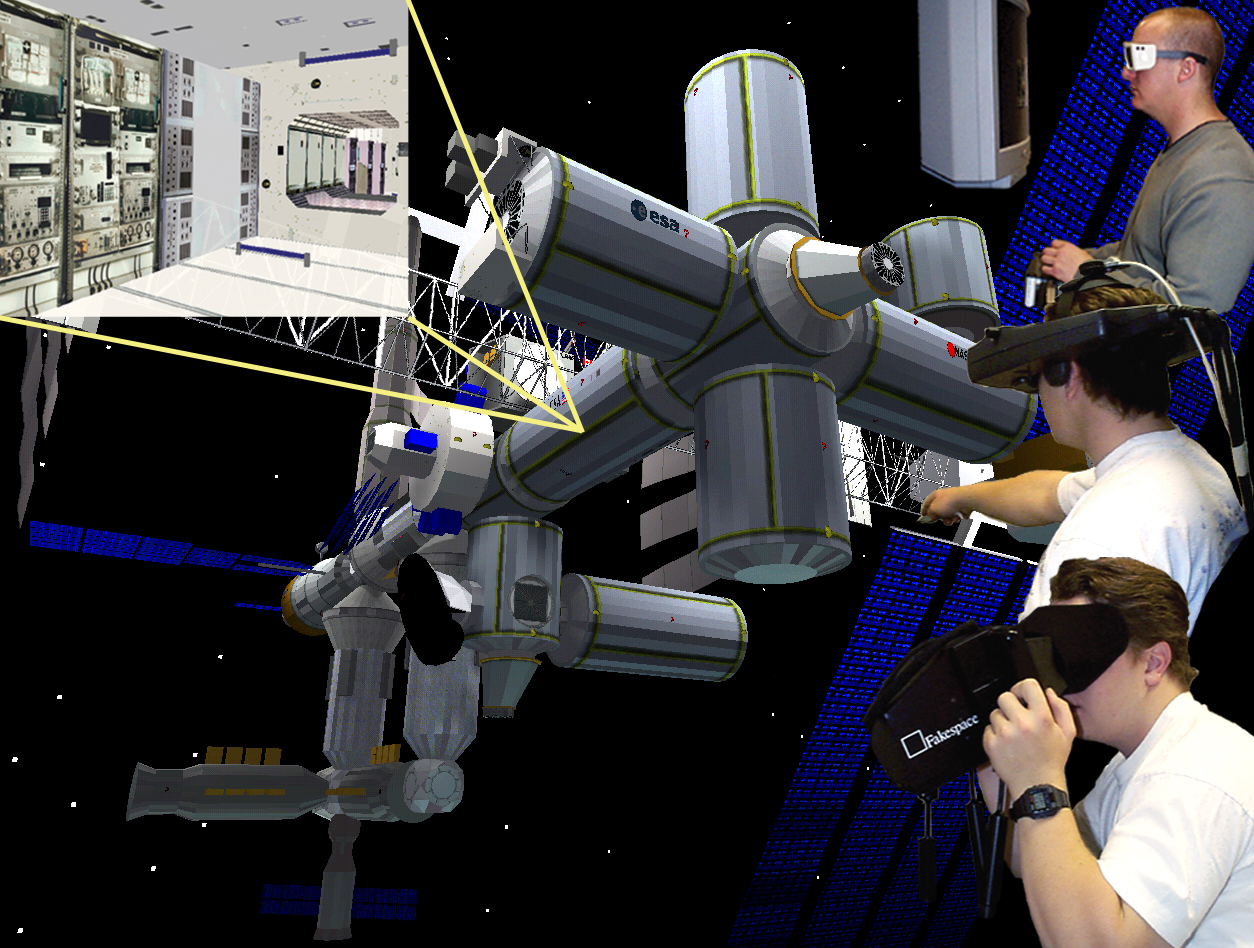

Virtual environment simulation historically suffered from low visual and low dynamic fidelity because of the high cost of high-fidelity hardware and software. Researchers in the Advanced Controls and Displays lab worked to:

- Improve the dynamic fidelity of the virtual environment system, with the goal of accurately rendering virtual objects in depth so that they could naturally designate aircraft positions as seen from a control tower

- Develop and test improved techniques for the dynamic registration of superimposed computer-generated imagery in an application

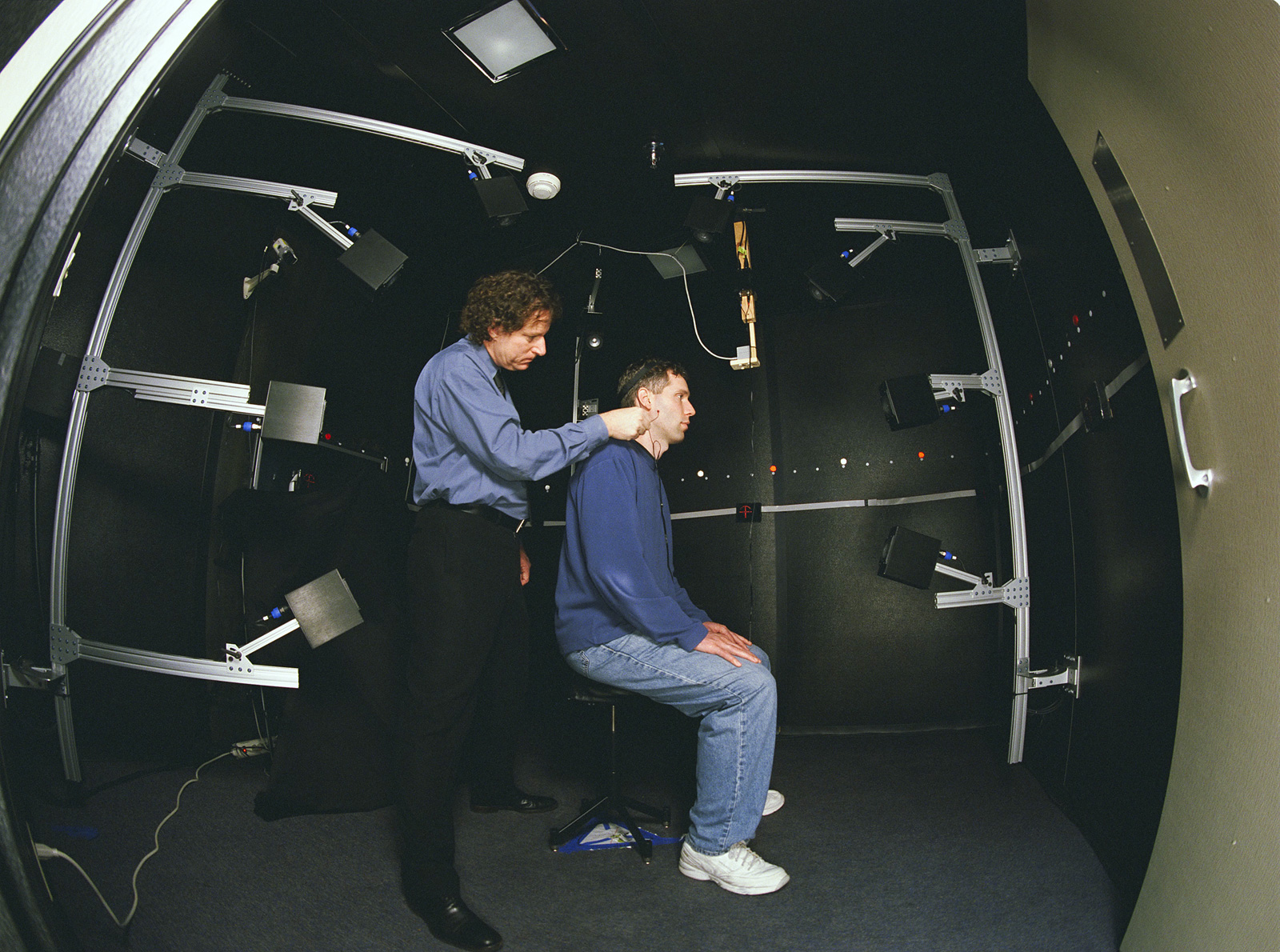

High dynamic fidelity was needed for perceptual stability of the virtual objects (the aircraft symbols), so that the user could sense the objects as stable when seen through the tower windows. The required dynamic fidelity was determined through psychophysical testing and part-task simulation of several specific tasks for which the virtual environment or augmented environment system (head-mounted optical overlay of computer graphics) was likely to be used.

Predictive filter techniques for improving the dynamic registration of augmented reality displays were then designed, developed, tested, and evaluated.
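The page does not say which predictive filters were used. As a stand-in, the sketch below shows an alpha-beta filter, one of the simplest predictors of this kind: it estimates head angle and angular rate from tracker samples, then extrapolates ahead by the display latency so the overlay is drawn where the head is expected to be. The class name, gains, and units are assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class AlphaBetaPredictor:
    alpha: float = 0.5   # position correction gain (assumed value)
    beta: float = 0.1    # rate correction gain (assumed value)
    angle: float = 0.0   # estimated head angle, degrees
    rate: float = 0.0    # estimated angular rate, degrees per second

    def update(self, measured_angle: float, dt: float) -> None:
        """Fold in one tracker sample taken dt seconds after the previous one."""
        predicted = self.angle + self.rate * dt
        residual = measured_angle - predicted
        self.angle = predicted + self.alpha * residual
        self.rate = self.rate + (self.beta / dt) * residual

    def predict(self, latency: float) -> float:
        """Extrapolate the head angle `latency` seconds past the last sample."""
        return self.angle + self.rate * latency


if __name__ == "__main__":
    tracker = AlphaBetaPredictor()
    dt = 0.01                      # assumed 100 Hz tracker
    for k in range(1, 201):        # head turning at a steady 100 deg/s
        tracker.update(measured_angle=100.0 * dt * k, dt=dt)
    # Angle to render for an assumed 50 ms end-to-end latency.
    print(round(tracker.predict(0.05), 3))
```

The gains trade responsiveness against noise amplification: larger values follow rapid head motion more closely but pass more tracker jitter into the rendered overlay.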

Augmented reality display research resulted in reduced workload and improved spatial situation awareness for air traffic controllers in the control tower. Visual flight rules could then be extended into new conditions through the electronically enabled ability to see through fog and occluding obstacles.

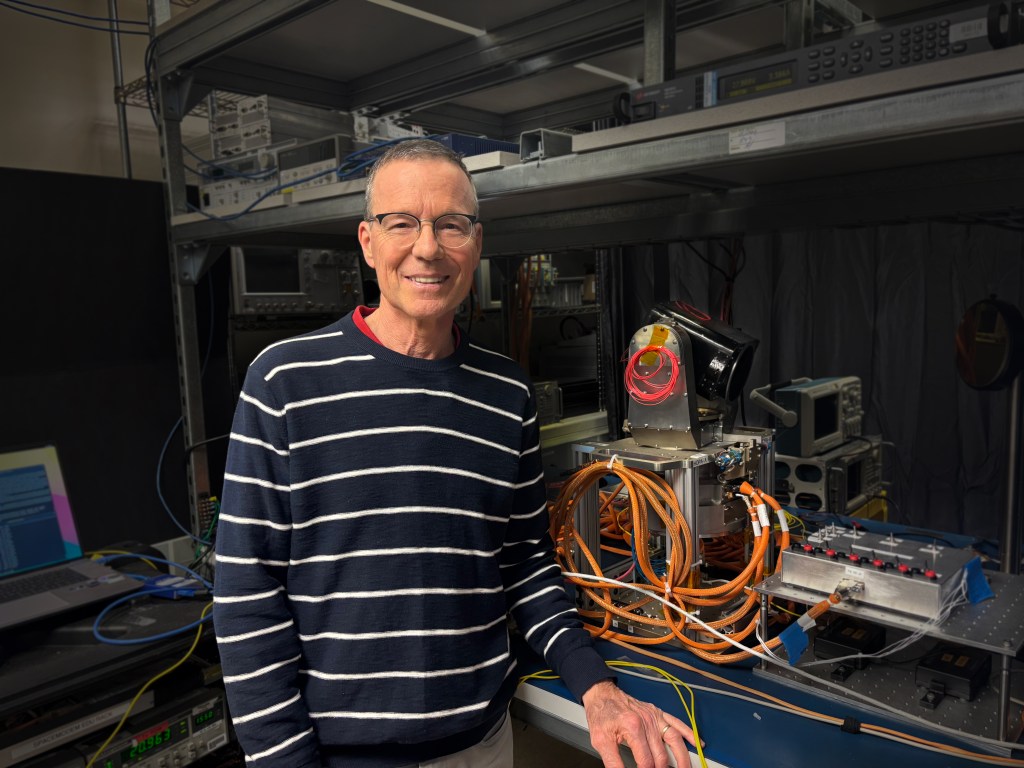

Haptic Virtual Interfaces

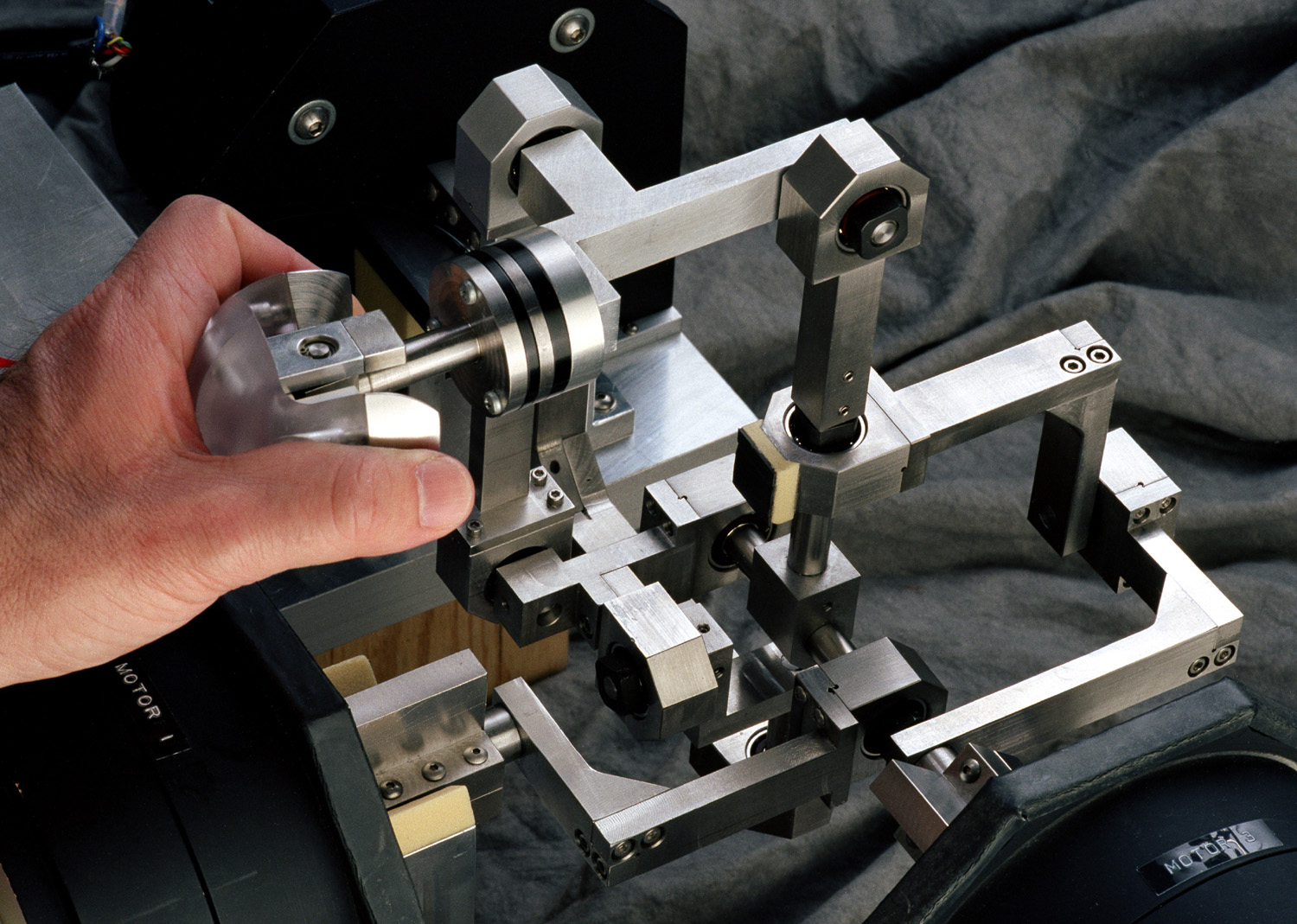

To help NASA determine human factors guidelines for effective haptic (force-reflecting) manual interfaces for multisensory virtual simulator and teleoperation displays, ACD researchers developed and tested complex haptic virtual interfaces.

The two major program aspects included:

- The design and implementation of a novel, very high performance three-degree-of-freedom (DOF) force-reflecting manual interface for use with our laboratory’s virtual visual display as a research testbed

- Examination of human perception and manual task performance through psychophysical discrimination and manual target acquisition, respectively

High-fidelity virtual environment and virtual object simulations using tuned predictive filters have allowed presentation of perceptually stable virtual objects, enabling testing of new visual-manual phenomena and measurement of the simulation fidelity requirements for several levels of manipulative precision.

A patent was awarded for the three-degree-of-freedom parallel mechanical linkage.

Virtual Environments/Visual Simulations

One goal of the Advanced Controls and Displays Lab was to improve the performance of real-time visual simulation for aerospace systems.

In multiple studies, we incorporated models of human vision capabilities into graphical rendering architecture to improve apparent visual simulation performance with reduced system load. We exploited the limitations of visual sensitivities to intelligently cull aspects of the scene that didn’t need to be rendered.

Ultimately, several effective graphical rendering techniques were developed, including mixed-resolution stereo displays and spatially phase-shifted imagery. We were able to reduce the computational cost required to achieve a desired level of image quality and frame rate.
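As a rough illustration of culling based on visual sensitivity, the sketch below skips scene features whose angular size falls below an acuity threshold that grows with distance from the point of gaze. It uses a common linear approximation for the minimum resolvable angle. The constants and function names are assumptions for illustration, and this is not the lab's model or either of the techniques named above.

```python
import math

FOVEAL_MAR_DEG = 1.0 / 60.0   # assumed: about 1 arcminute resolvable at the fovea
E2_DEG = 2.3                  # assumed: eccentricity at which the threshold doubles


def min_resolvable_angle(eccentricity_deg: float) -> float:
    """Linear approximation of the smallest resolvable angle, in degrees."""
    return FOVEAL_MAR_DEG * (1.0 + eccentricity_deg / E2_DEG)


def angular_size_deg(feature_size: float, distance: float) -> float:
    """Visual angle subtended by a feature of the given size at the given distance."""
    return math.degrees(2.0 * math.atan2(feature_size / 2.0, distance))


def should_render(feature_size: float, distance: float,
                  eccentricity_deg: float) -> bool:
    """True if the feature is large enough to be resolved at this eccentricity."""
    return angular_size_deg(feature_size, distance) >= min_resolvable_angle(eccentricity_deg)


if __name__ == "__main__":
    # A 1 cm detail viewed from 10 m, at the gaze point and 30 degrees away from it.
    print(should_render(0.01, 10.0, 0.0))
    print(should_render(0.01, 10.0, 30.0))
```

A renderer could apply a test like this per object or per level of detail, spending computation only where the viewer can plausibly see the difference.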

Perspective Visual Cues for Vehicular Control

ACD researchers developed models to describe the human operator performing a manual control task (such as docking control) using a perspective scene. Examples of perspective scenes include out-the-window viewing, camera images, and simulator imagery.

We combined models of manual control with simplified models of perspective scene viewing and visual cue selection to determine the most effective visual cues for a particular task. These models can be directly validated and refined through simple experimental measurements.
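One simple way to compare candidate visual cues is to ask how strongly each changes in the image when the controlled state changes. The sketch below does this for two textbook ground-plane cues as seen from a given eye height: the splay angle of a line parallel to the direction of travel, and the depression angle of a point ahead. The choice of cues, the function names, and the finite-difference step are assumptions for illustration only. The page does not specify the lab's models.

```python
import math


def splay_angle(lateral_offset: float, eye_height: float) -> float:
    """Image angle from vertical of a ground line parallel to the travel direction."""
    return math.atan2(lateral_offset, eye_height)


def depression_angle(forward_distance: float, eye_height: float) -> float:
    """Angle below the horizon of a ground point at the given forward distance."""
    return math.atan2(eye_height, forward_distance)


def sensitivity(cue, geometry: float, eye_height: float, step: float = 1e-4) -> float:
    """Central-difference estimate of d(cue)/d(eye_height), radians per unit height."""
    high = cue(geometry, eye_height + step)
    low = cue(geometry, eye_height - step)
    return (high - low) / (2.0 * step)


if __name__ == "__main__":
    h = 10.0  # assumed eye height, in the same length units as the scene geometry
    print(sensitivity(splay_angle, 20.0, h))       # line 20 units to the side
    print(sensitivity(depression_angle, 10.0, h))  # point 10 units ahead
```

A cue with a larger magnitude of sensitivity gives the operator more image change per unit of state error, which is one plausible ingredient in ranking cues for a task such as altitude control.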

Perspective scene content is a design variable, whether through choice of window arrangements and pilot eyepoint, actual or simulated scene markings, imaging system characteristics, or symbolic display augmentation. Perspective scene content is typically designed by trial and error, a process that is costly and only considers a small subset of potential options. The technique we developed provides an analytical tool to develop, test, and validate perspective scene designs at the initial stages of a design process.

Virtual Acoustic Environments

ACD researchers developed enabling technologies for increased situational awareness and speech intelligibility in advanced acoustic displays.

We developed methods for synthesizing spatial sound from measured acoustical features of humans and the working environment. We also evaluated task performance, intelligibility, and localization using advanced technologies for acoustic displays in aerospace applications. Our research enabled new technological advancements in the development of human-centered information displays.

This basic research enabled technological development worldwide. Aerospace applications research addressed collision avoidance systems, virtual reality for training, enhanced communications, and situational awareness.

Noteworthy Publications

Adelstein, B.D., Gayme, D.F., Ho, P., Kazerooni, H. (1998). Three Degree of Freedom Haptic Interface for Precision Manipulation, Proceedings, Dynamic Systems and Control, DSC-Vol. 64, American Society of Mechanical Engineers, New York, p. 185.

Kaiser, M.K., Remington, R. (1988). Spatial Cognition, In T. Tanner, Y. A. Clearwater, & M. M. Cohen (Eds.) Space Station Human Factors Research Review, Volume IV. NASA Conference Publication #2426

Ellis, S.R., Kaiser, M.K. (1989). Spatial Displays and Spatial Instruments, NASA CP10032, Ames Research Center, Moffett Field

Wenzel, E.M. (1990). Three-Dimensional Virtual Acoustic Displays, NASA TM103835

Wenzel, E.M. (1992). Localization in Synthetic Acoustic Environments, Presence, 1, 80-107

Ellis, S.R. (1994). What are Virtual Environments?, IEEE: Computer Graphics & Applications, 14:1, 17-22

Begault, D.R. (1994). 3-D Sound for Virtual Reality and Multimedia, Cambridge, MA: Academic Press Professional

Begault, D.R., Erbe, T. (1994). Multichannel Spatial Auditory Display for Speech Communications, Journal of the Audio Engineering Society, 42, 819-826

Ellis, S.R. (1995). The Design of Pictorial Instruments. In Human Computer Interaction and Virtual Environments, NASA CP 3320, NASA Langley Research Center, pp. 1324.

Adelstein, B.D., Kaiser, M.K., Beutter, B.R., McCann, R.S., Anderson, M.R. (2013). Display Strobing: An Effective Countermeasure Against Visual Blur from Whole-Body Vibration, Acta Astronautica, 92, 53-64

* Please note, this webpage is not actively maintained and is for historical reference only.