A conversation with Kai Goebel, Tech Area Lead for Discovery and Systems Health at NASA’s Ames Research Center in Silicon Valley.

Transcript

Matthew C. Buffington (Host): You are listening to the NASA in Silicon Valley podcast, episode 20. Today, we are chatting with Kai Goebel, the Tech Area Lead for Discovery and Systems Health. We talk about NASA technology and how it drives exploration, particularly how computer science and engineering work together to solve complicated problems in harsh conditions. We cover his work in creating health systems for aeronautics and how that led him into working on autonomous robotics. Here is Kai Goebel.

[Music]

Host: What brought you to Silicon Valley? What brought you to NASA? How did you show up?

Kai Goebel: Well, it’s actually family. My wife was born in the area. And I worked for GE, at corporate research in upstate New York. And we spent almost 10 years there. And after 10 years, my wife said, “Enough. That’s it with the winters.”

Host: I’m done.

Kai Goebel: “Find yourself a job back on the West Coast.”

Host: My wife, we had this similar conversation, where it was like, “We’re done with humidity and done with winter. Let’s go to the land of perfect weather.”

Kai Goebel: Exactly.

Host: In fact, I think we make several comments and references in the podcast about how awesome the weather is here.

Kai Goebel: Yeah.

Host: It’s a common theme. So were you always interested in robotics or NASA-type stuff when you were a kid? How did you end up even going to school? Tell us about that.

Kai Goebel: Well, I’ve always been interested in technology and tinkering. And I’ve grown more and more fond of it as I’ve gotten older. And the thing that really interested me was to figure out, when do things break? Or how do they break? And I made a career of that. So that’s how I got into the field of diagnostics, which is really how do you determine that something doesn’t work. And later prognostics. That’s what I’ve been doing here really, is to determine at what time will it break.

Host: Oh, really? Okay.

Kai Goebel: And that, it turns out is a super interesting characteristic to have in autonomy also, where you want to know at any given time, how am I doing? Right? So that is an area that I’ve been very involved with here at Ames for the last 10 years.

Host: So is that like an engineering background?

Kai Goebel: I have an engineering background. Yeah, I’m a mechanical engineer. My first job, I got hired as a computer scientist, so that was a little awkward.

Host: Yeah. Relevant, but still.

Kai Goebel: Yeah. Well, it turns out that as a mechanical engineer, you have a lot to teach. And you are really rooted in what is possible. And for a company like GE, that was quite relevant.

Host: The research. Yeah.

Kai Goebel: It is also quite relevant for NASA. So I think nowadays there really isn’t sort of the pure computer scientist or the pure engineer anyway.

Host: You always have to know a little bit of both.

Kai Goebel: Yeah, you now have to know a lot of —

Host: A little — oh, a lot of — that’s cute.

Kai Goebel: Well, and more. Right? It’s beyond just engineering. You have to also be in the social sciences and all that kind of stuff, which we don’t get taught typically in engineering school. But that is important, too, is to really understand how do you put in social values into engineering systems.

Host: Okay, interesting. Cool. So then you were at GE in New York. You came out here to the Bay Area to start working at NASA Ames. Did you just see an advertisement? Was there some connection? Or you just went in cold? How did that actually happen?

Kai Goebel: Well, you let your network know that —

Host: Nice.

Kai Goebel: — that there’s an interest in moving out. And that’s essentially how it happened.

Host: And then how long have you been here?

Kai Goebel: Ten years almost. Yeah.

Host: About 10 years.

Kai Goebel: Actually a little more than 10 years now.

Host: When you first came over, did you start off doing more computer science-y kind of stuff? Or was it straight in with the engineers, and you arrived in and started tinkering and building things? How’d that work?

Kai Goebel: Here at Ames?

Host: Yeah.

Kai Goebel: Yeah, that was beautiful. So I show up, and I ask my boss, “So, what do you want me to do?” And he asks, “Well, what do you want to do?”

Host: Yes.

Kai Goebel: And I thought, “Wow, this is going to be great.” So we ended up building a lab, which centered around this prognostic capability, which, at that time, was really ground-breaking. And we hired 10 people and really had a lot of fun.

Host: Start building a team and pulling people together —

Kai Goebel: Yeah, yeah.

Host: — start making stuff.

Kai Goebel: Building a lab, showing people what we could do. And that was really awesome.

Host: Wow. So were there any big projects that people would recognize or something? I’m guessing it was probably during the shuttle period or something? Or was there some science?

Kai Goebel: Yes, the Shuttle was still around. Most of our funding really came from aeronautics —

Host: Oh, wow. Okay.

Kai Goebel: — which allows enhanced… but mostly to do more foundational kind of work as opposed to the really applied work. And since this was really a new capability, a new technology, a lot had to be built up from the ground. And aeronautics, that was the area that allowed us to do that.

Host: So within aeronautics is like doing more research? Or others do the research and then you figure out, okay, let’s see if we can make this idea work? Is that what you’re doing on the engineering side?

Kai Goebel: No, it was really the research —

Host: Or both?

Kai Goebel: — in aeronautics. So there was a project back then called the Integrated Vehicle Health Management project. And it dealt with how do you determine the wellbeing of a vehicle, in this case, an aircraft. So you want to know, is your engine functioning? And if not —

Host: The “check engine” light comes on.

Kai Goebel: — you want to know that. Yeah. Or, right.

Host: On the airplane, right?

Kai Goebel: Well, sort of like that.

Host: Sort of like that.

Kai Goebel: Or is your landing gear okay?

Host: Okay.

Kai Goebel: Is your fuel pump okay? Is your navigation system doing well?

Host: So you’re building the system that helps —

Kai Goebel: Yeah.

Host: — check the health and safety of your aircraft?

Kai Goebel: Precisely. And there was a lot of interest in that. And we had a lot of fun in really examining a lot of these aspects. We built a lab. And in that lab, we try to break stuff. So we were aging it. We were beating on it. We were heating it. We zapped it.

Host: Do whatever you could.

Kai Goebel: Yeah. And then we designed algorithms. We built models off of the system. We then tried to explain, here’s how it’s working. And then how it’s behaving when it’s under stress. And then we tried to say, okay, if that thing now is under stress, can I then predict at what point —

Host: At what point is it going to break?

Kai Goebel: — it’s going to break. And we used it in the lab. So we ran these algorithms on the various things that we were trying to break in the lab.

Host: And then you figure out why it broke, and how at different points in time —

Kai Goebel: Yeah, yeah. So the exciting thing is to be able to determine it happens in half an hour. And then in half an hour, it actually pops.

Host: Right, okay.

Kai Goebel: So that’s super exciting. Now, for a more practical example, we applied that to batteries on a UAV, on an uninhabited air vehicle. And when you’re flying that and it runs off batteries, you want to know at what point does it run out of batteries. And it seems sort of obvious when you’re walking, whenever —

Host: Yeah, of course, you’re flying it, you want to know when the batteries are going to run out.

Kai Goebel: — yeah, right. But you have a AA battery or something like that, and you know, well, that much capacity, and in 20 minutes it should run out of juice. Turns out that there are a lot of elements in operations that induce variability that are hidden from the operator. And so if you’re flying that thing, and suddenly after 10 minutes, the propeller stops, that’s not a very desired thing to happen.

Host: It’s not a good outcome.

Kai Goebel: And that is precisely what happened with some of our colleagues. And the aircraft came down and had a rough landing, in quotation marks.

Host: Yes, having a bad day.

Kai Goebel: And since it’s NASA, there’s a safety investigation and all that. And so those colleagues came to us and said, “Hey, can you help us out with that prognostic thing?”

Host: To understand.

Kai Goebel: Yeah. So we integrated the algorithms onto the aircraft. And it determines in real time how much time do you have left in the air, and then communicates that down to the pilot.

Host: Oh, wow.

Kai Goebel: And then gives the pilot a two-minute warning and says, okay.

Host: Now’s the time.

Kai Goebel: Because the pilot said, “Okay, I need about two minutes to turn around, come back to the landing strip. And if I can’t do that, it gives me time to do another go-around.” And we’ve done 75 flights now.

Host: Wow.

Kai Goebel: And the flight director has so much confidence in the algorithm that he says, “Okay, you can rely on that thing. You don’t have to have that super safety measure anymore.” And it has bought us 50 percent more in the air. And so there’s still some real time that we want to do real science.

Host: Okay.

Kai Goebel: And so one thing I wanted to add is that the variability comes because of maybe headwinds.

Host: I was going to say, yeah, different factors.

Kai Goebel: Exactly. Or you decide that you want to fly a slightly different route or go to different waypoints or linger longer where you are right now. And that means there are different loads on the batteries that you didn’t anticipate, or it might be at a very different temperature. So if you’re flying in winter, it’s one thing. But if you’re flying in 100 degrees —

Host: Yeah. It’s hot and humid and —

Kai Goebel: — it’s hot and —

Host: — things —

Kai Goebel: — yeah.

Host: — things are expanding.

Kai Goebel: Yeah. And in particular, the electrochemistry of a battery behaves very differently —

Host: Really?

Kai Goebel: — with different temperatures. Yeah.
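The two-minute warning described above can be illustrated with a deliberately simplified sketch. The conversation does not describe the actual NASA algorithm; the names `BatteryState`, `remaining_flight_time_s`, and `should_warn`, and the assumption that remaining time is simply remaining charge divided by expected current draw, are hypothetical and chosen only to show how a change in load (for example, a headwind) shortens the predicted time in the air.

```python
from dataclasses import dataclass

# The two-minute margin the pilot asked for, in seconds.
WARNING_MARGIN_S = 120.0


@dataclass
class BatteryState:
    """Hypothetical snapshot of the battery estimate at one instant."""
    remaining_charge_ah: float  # estimated charge left, in amp-hours
    load_current_a: float       # expected average current draw, in amps


def remaining_flight_time_s(state: BatteryState) -> float:
    """Seconds until the battery is empty at the expected load.

    Assumption: a constant-load model. Real battery behavior also depends
    on temperature and electrochemistry, which this sketch ignores.
    """
    if state.load_current_a <= 0:
        raise ValueError("load current must be positive")
    return state.remaining_charge_ah / state.load_current_a * 3600.0


def should_warn(state: BatteryState, margin_s: float = WARNING_MARGIN_S) -> bool:
    """True once the predicted remaining time drops to the warning margin."""
    return remaining_flight_time_s(state) <= margin_s


if __name__ == "__main__":
    calm = BatteryState(remaining_charge_ah=1.0, load_current_a=20.0)
    headwind = BatteryState(remaining_charge_ah=1.0, load_current_a=40.0)
    print(should_warn(calm), should_warn(headwind))
```

With the same charge left, doubling the load halves the predicted time, so the second state triggers the warning while the first does not.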

Host: Okay. So now you’re doing a lot of stuff with autonomous vehicles or robots, for that word. So was there always an autonomous factor in some of the tinkering that you were doing? Or at what point in time did you start figuring out, all right, let’s let this thing fly on its own. Or let’s build an algorithm to kind of help it know how to fly or move or whatever.

Kai Goebel: Autonomy is a hot topic.

Host: Yeah, especially nowadays.

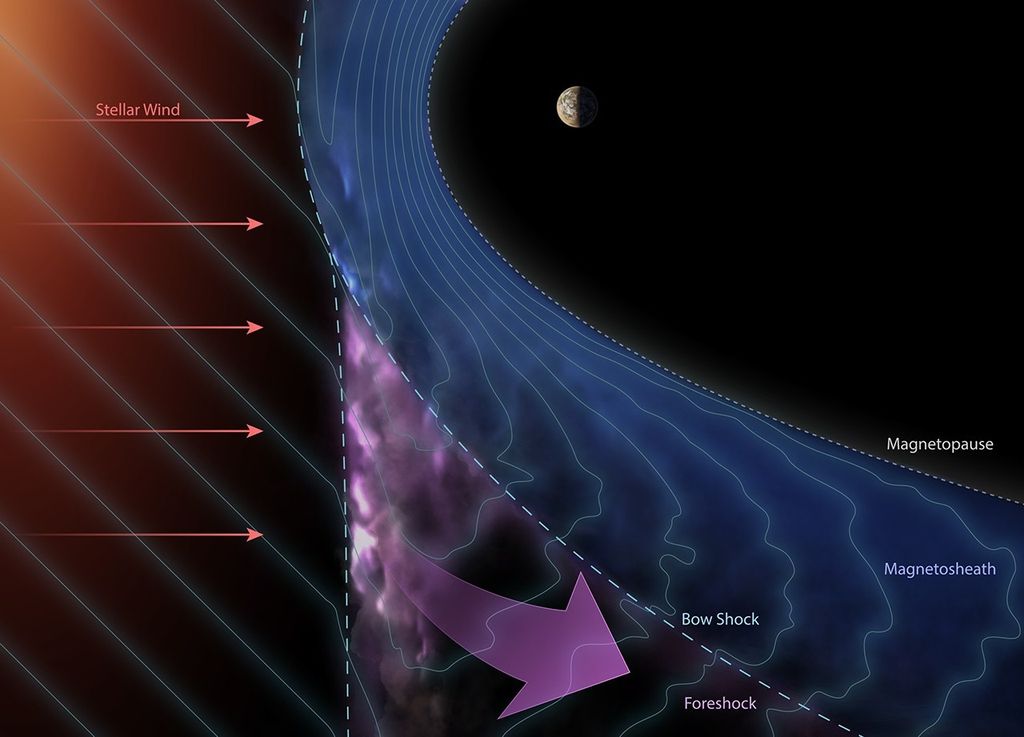

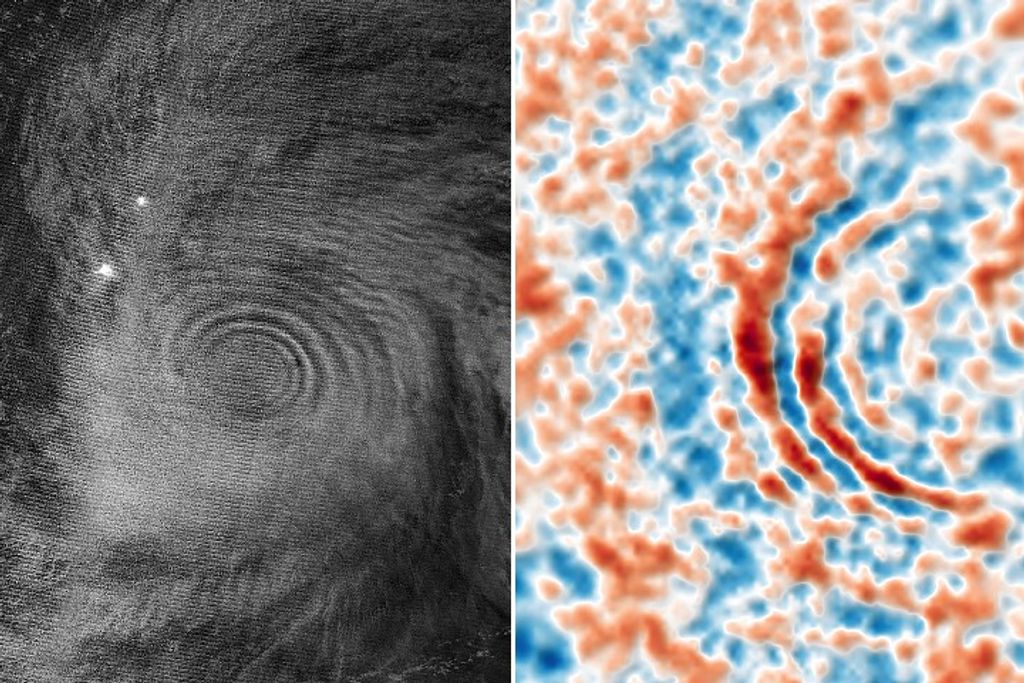

Kai Goebel: Yeah. It has been a hot topic for NASA for a while, because we have these things out there that have to survive in hostile environments —

Host: Like space.

Kai Goebel: — like space, where it can be very cold or not. If you’re pointing towards the sun, it can be rather —

Host: Yeah, all the radiation.

Kai Goebel: — warm. Yeah, exactly. And so you’re encountering environments that you didn’t think of. And so what are you going to do then? How are you going to react? And the answer is, it depends.

Host: Nice.

Kai Goebel: You have to trade off different objectives. So you have your resources. You want to maybe be careful with those and maximize those, maximize use of those. You want to perform some science. You want to get to some destination. You want to be mindful of safety. And so you have all these criteria, and you have to trade those off. So if you use more resources, well, maybe you can do less science.

Host: You have to give or take. You have to figure out what’s your priorities.

Kai Goebel: Yeah. And that’s where autonomy comes into play. So it sort of figures that out. Most of the traditional operations at NASA have been ground-based. Like Apollo, you call home Houston.

Host: Have a problem.

Kai Goebel: And then they say, “Okay, wait a second. We’ll figure it out, and then we’re going to tell you what to do.” Now, if you’re at Mars, if you’re at the far point, you have a 45-minute communication delay or something like that.

Host: Oh, wow.

Kai Goebel: Right? So if you call Houston, “We’ve got a problem,” right?

Host: Stopwatch.

Kai Goebel: Then you wait 45 minutes, and the answer comes, “Come again? I didn’t hear you clearly.” And so obviously that paradigm —

Host: It complicates things.

Kai Goebel: — is not going to work really well. And so we need to move some of the decision-making onto the vehicle or onto whatever we have, the habitat or something like that. And for certain events, the decisions need to be made really quickly. And so that needs to happen on-site.

And when there are no humans, for example, if you’re putting some equipment on Mars in advance of a mission, that stuff still needs to be maintained. And so it needs to figure out that something is happening and needs to react to that in some form or fashion. And so that kind of functionality needs to be built into those systems, into the vehicle or into whatever asset you have, the habitat. And ideally that can be done through autonomy.
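The communication delay mentioned above follows from the speed of light. As a rough worked example, assuming approximate Earth-Mars distances of about 54.6 million km at closest approach and about 401 million km at the far point (both figures are approximations supplied for illustration, not taken from the conversation), the one-way and round-trip signal times can be computed as follows. The function name `signal_delay_min` is hypothetical.

```python
SPEED_OF_LIGHT_KM_S = 299_792.458  # exact by definition

# Approximate Earth-Mars distances in km (assumed values for illustration).
NEAR_KM = 54.6e6
FAR_KM = 401e6


def signal_delay_min(distance_km: float) -> float:
    """One-way light travel time in minutes over the given distance."""
    return distance_km / SPEED_OF_LIGHT_KM_S / 60.0


if __name__ == "__main__":
    for label, distance in (("near", NEAR_KM), ("far", FAR_KM)):
        one_way = signal_delay_min(distance)
        print(f"{label}: one-way {one_way:.1f} min, round trip {2 * one_way:.1f} min")
```

Under these assumed distances, the round trip at the far point comes out to roughly three quarters of an hour, which is in line with the figure quoted in the conversation.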

Host: Okay. And you talked about using autonomous and kind of putting values or judgments in that. That’s basically what you are. You’re teaching the robot to fish. And so it can kind of solve those problems on its own, but you have to determine in advance, all right, these are our priorities. If this, then do that. If this, do this. You’ve got to figure all that stuff out in our own heads to then make that algorithm and to build the robot to know what the priorities are.

Kai Goebel: Right. Right. You give the robot some overarching goal and say, “Hey, survive.”

Host: That’s a good goal to have.

Kai Goebel: And then the robot figures out, well, to survive, I need to eat, say, if it were a robot that has to eat. And then the robot can decide how to get food. And it could go fishing, but maybe it’s going trapping, or maybe it’s going farming or something like that. And that really depends on the environment and on the context and what’s happening right now around it. And what we found is that if you — this is speaking more abstractly now —

Host: Yeah, okay.

Kai Goebel: — if you are trying to pre-compile every single decision, then your system becomes super rigid and will not react in an optimal fashion, because you tell it to fish while it’s standing in a field. And so that’s not the right thing to do. And if you allow the robot or the thing to do the right thing, it figures out that I shouldn’t be fishing here. I need to go to the river. And I think that is sort of the essence of autonomy, is to allow some freedom of doing the right thing, of self-government, if you will. And that’s, of course, very tricky to code up.

Host: Yeah, I was going to say.

Kai Goebel: But it also has a lot of promise in that it has more chances to survive.

Host: So it’s a lot more than just if this happens, then you need to do this, in this scenario, act this way. It’s just like it’s teaching it to think. Well, or teaching it to adapt and be more mobile or more agile than just giving it a list of rules to follow.

Kai Goebel: Exactly.

Host: It’s letting it understand its flexibility and take inputs and figure it out.

Kai Goebel: Exactly. And there are a lot of challenges with that. So how, for example, do you prove that this thing is going to be guaranteed to make the right decision?

Host: And so do you do also a lot of work — we talked about autonomy and how it’s really hot right now. Of course, autonomous cars, you think of other autonomous vehicles or things. Are you doing a lot of work with that? And kind of how that’s all growing, both within NASA and in the motor vehicle world.

Kai Goebel: Sure, sure. Autonomous vehicles for us are rovers.

Host: Nice.

Kai Goebel: And, yes, we are working with those. The particular interest that I have is to assess again the health of the vehicle and what that means for autonomy. So the asset needs to make a determination of, how am I doing? How am I feeling today? Are my batteries charged? Are my tires fully pumped up? And all that. So how far can I go? How fast can I go? Can I make the really hard turns?

The environment also plays a huge role. So am I going to go over rough terrain? Or is it smooth? And so that all has to be factored in, and then it has to factor in, are my motors in good shape, or are they worn down? If the front right one is worn a little bit more, does it mean I have to work the left one a little harder? Because —

Host: To compensate.

Kai Goebel: — I can’t have imbalance, yeah. And so that is really an important aspect for autonomy.

Host: And that’s almost a call back to some of your earlier work of airplanes and figuring out the health of the system.

Kai Goebel: That’s right. That’s right.

Host: And now you’re continuing with that. Was that on purpose? Has this just been a theme in your career? It just happens to be how it’s turned out?

Kai Goebel: Yeah, I don’t think that autonomy was something that I set out to do. I think that sort of came along and was an opportunity to get engaged with. Autonomous aircraft, of course, are super hot, too. You have all those drones and all that.

And that’s where we, in fact, got engaged first with those prognostic algorithms. With the rovers, we have been trying out some of the decision-making algorithms. So those are really algorithms that take information about the health and then figure out, what am I going to do now? And that is super exciting, because you have a plethora of possible solutions.

Host: Wow.

Kai Goebel: Most of them are crappy.

Host: Yes.

Kai Goebel: So you don’t want to do that.

Host: You have to find the best bad decision?

Kai Goebel: Right. Well, and then there are so-so decisions. And then there are the good decisions. But the problem is there’s more than one good decision. As soon as you have more than one objective, you have possibly a large number of optimal solutions. Now, you’re still going to pick one. But on top of that, to generate all these possible solutions takes possibly a long time. And you may not have a long time. So to speed up these algorithms and to come up with a solution very quickly is one of the research themes that we have been engaging in.
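The point that more than one objective leads to more than one optimal solution can be made concrete with a small sketch of Pareto filtering. This is a generic textbook technique, not the decision-making algorithm used on the rovers, and the `Plan` type, its two objectives, and the example numbers are all hypothetical.

```python
from typing import List, NamedTuple


class Plan(NamedTuple):
    """Hypothetical candidate plan with two competing objectives."""
    name: str
    science: float      # science return, higher is better
    energy_used: float  # resource cost, lower is better


def dominates(a: Plan, b: Plan) -> bool:
    """True if plan a is at least as good as b on both objectives
    and strictly better on at least one."""
    no_worse = a.science >= b.science and a.energy_used <= b.energy_used
    strictly_better = a.science > b.science or a.energy_used < b.energy_used
    return no_worse and strictly_better


def pareto_front(plans: List[Plan]) -> List[Plan]:
    """Keep only the plans that no other plan dominates."""
    return [p for p in plans if not any(dominates(q, p) for q in plans)]


if __name__ == "__main__":
    candidates = [
        Plan("A", science=5, energy_used=10),
        Plan("B", science=8, energy_used=20),
        Plan("C", science=4, energy_used=15),  # worse than A on both counts
        Plan("D", science=8, energy_used=25),  # same science as B, more energy
    ]
    print([p.name for p in pareto_front(candidates)])
```

Plans A and B both survive: A is cheaper, B returns more science, and neither is better on both counts, so a further preference is needed to pick one. This brute-force filter compares every pair of plans, which hints at why generating and screening many candidates can take a long time.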

Host: So what’s kind of the hot thing that you’re working on now? What are you waking up in the morning, and what’s keeping you up at night? Like you’re showing up to work, and you’re like, “I’ve got to figure this thing out.”

Kai Goebel: Yeah, I don’t think there’s just one thing. And that’s the beauty of the work that we’re doing, and of engineering really, is that a lot of things come together. You really have to keep moving the needle on a number of different technologies to make them come together in the end to really have a product that really provides the biggest value.

Host: Because they almost kind of build on each other, and then probably opens up new possibilities and categories as you move along.

Kai Goebel: That’s right. That’s right. So being able to reliably predict when something is happening is a continuing research theme for us. Being able to deal with the uncertainty is a big topic. So, for example, if I make a prediction that something will be happening in three hours plus-minus three hours, then that prediction isn’t really worth much.

Host: Yeah, exactly.

Kai Goebel: And so to somehow be able to encapsulate the uncertainty properly and rigorously and systematically and deal with that so that you get a smaller uncertainty window without just waving your hand is really important. And so for that, you need to know, what are your different uncertainty sources? So certainly you get your sensor noise.

But your models that you’re building to make all these estimations and predictions, they’re not really super accurate, either. And the model’s made up of model structure and model parameters. Then your current assessment is imprecise. Then your understanding of what the future will bring, there’s a large amount of uncertainty. And to really account for all of that properly and to stack that up is a non-trivial task.
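One common way to stack up several uncertainty sources is Monte Carlo sampling: draw each uncertain quantity from an assumed distribution, run the prediction many times, and read an interval off the results. The sketch below is a minimal illustration under invented assumptions (a linear damage model, Gaussian noise on the current state and on one model parameter, and a uniformly distributed future load). It is not the method used in the lab, and it does not represent uncertainty in the model structure itself.

```python
import random
from typing import Tuple

FAILURE_THRESHOLD = 1.0  # damage level at which the component fails (assumed)


def sample_time_to_failure(rng: random.Random) -> float:
    """Draw one possible time to failure, in hours, from a toy linear model."""
    damage_now = rng.gauss(0.5, 0.02)   # current state estimate, with sensor noise
    rate = rng.gauss(0.10, 0.01)        # damage per hour at nominal load (model parameter)
    future_load = rng.uniform(0.8, 1.2) # unknown future operating conditions
    return (FAILURE_THRESHOLD - damage_now) / (rate * future_load)


def prediction_interval(n: int = 10_000, seed: int = 0) -> Tuple[float, float, float]:
    """Return the 5th percentile, median, and 95th percentile of the samples."""
    rng = random.Random(seed)
    samples = sorted(sample_time_to_failure(rng) for _ in range(n))
    return samples[int(0.05 * n)], samples[n // 2], samples[int(0.95 * n)]


if __name__ == "__main__":
    low, median, high = prediction_interval()
    print(f"failure expected in about {median:.1f} h (90% interval {low:.1f} to {high:.1f} h)")
```

Narrowing any one of the input distributions narrows the resulting interval, which is one way to see which uncertainty source matters most.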

Host: Well, awesome. This is fascinating stuff. And it’s really interesting to see the convergence of the research and the engineering, just figuring out how things work. So for anybody who’s listening who has any more questions or anything for Kai, we’re using #NASASiliconValley, and we’re on Twitter @NASAAmes. This is awesome. Thanks for coming on over.

Kai Goebel: Well, thanks for having me.

[End]
