Fluid-Lensing based Machine Learning for Augmenting Earth Science Coral Datasets

Abstract

Authors: Alan Li and Ved Chirayath, Laboratory for Advanced Sensing, NASA Ames Research Center.

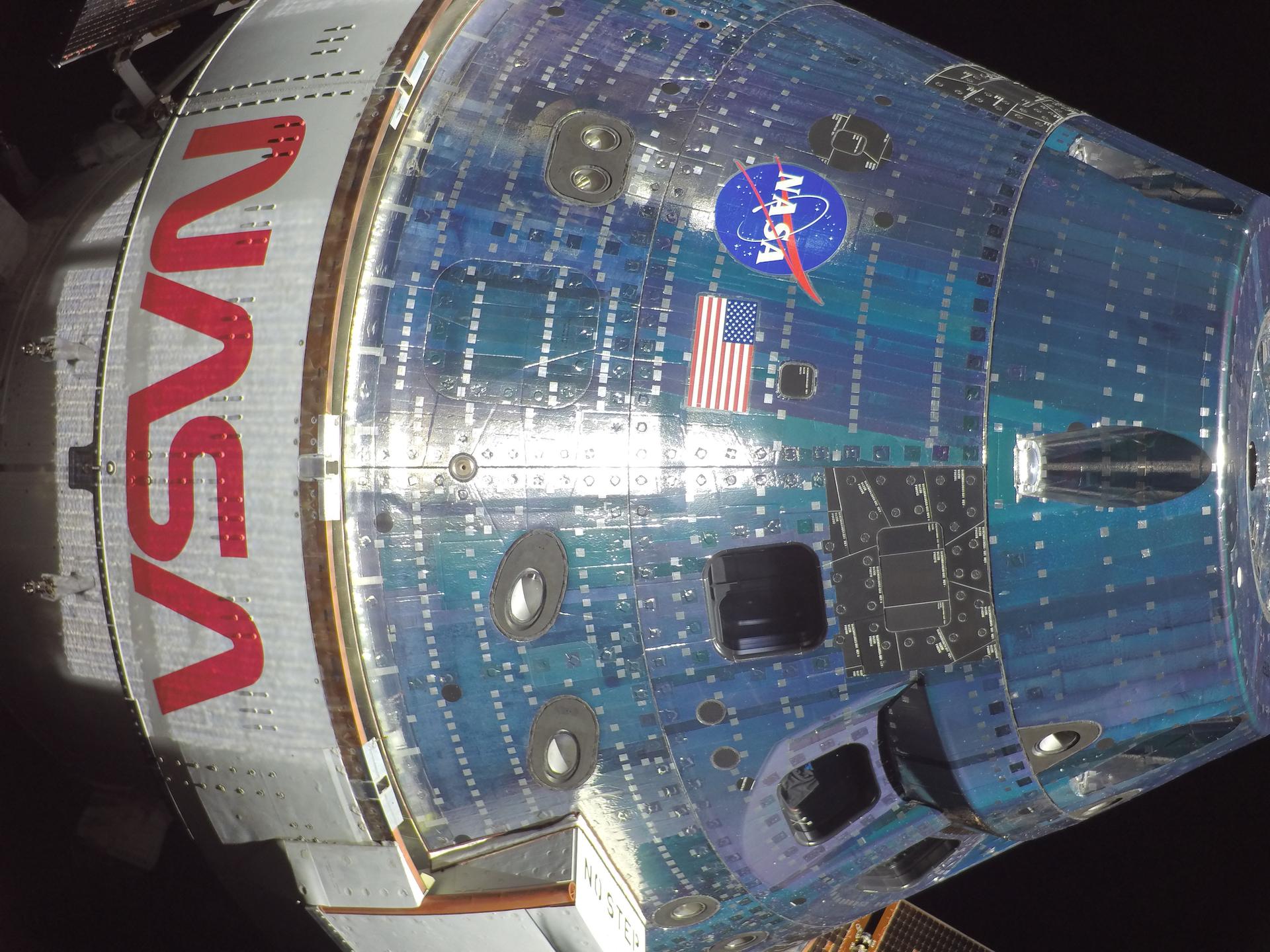

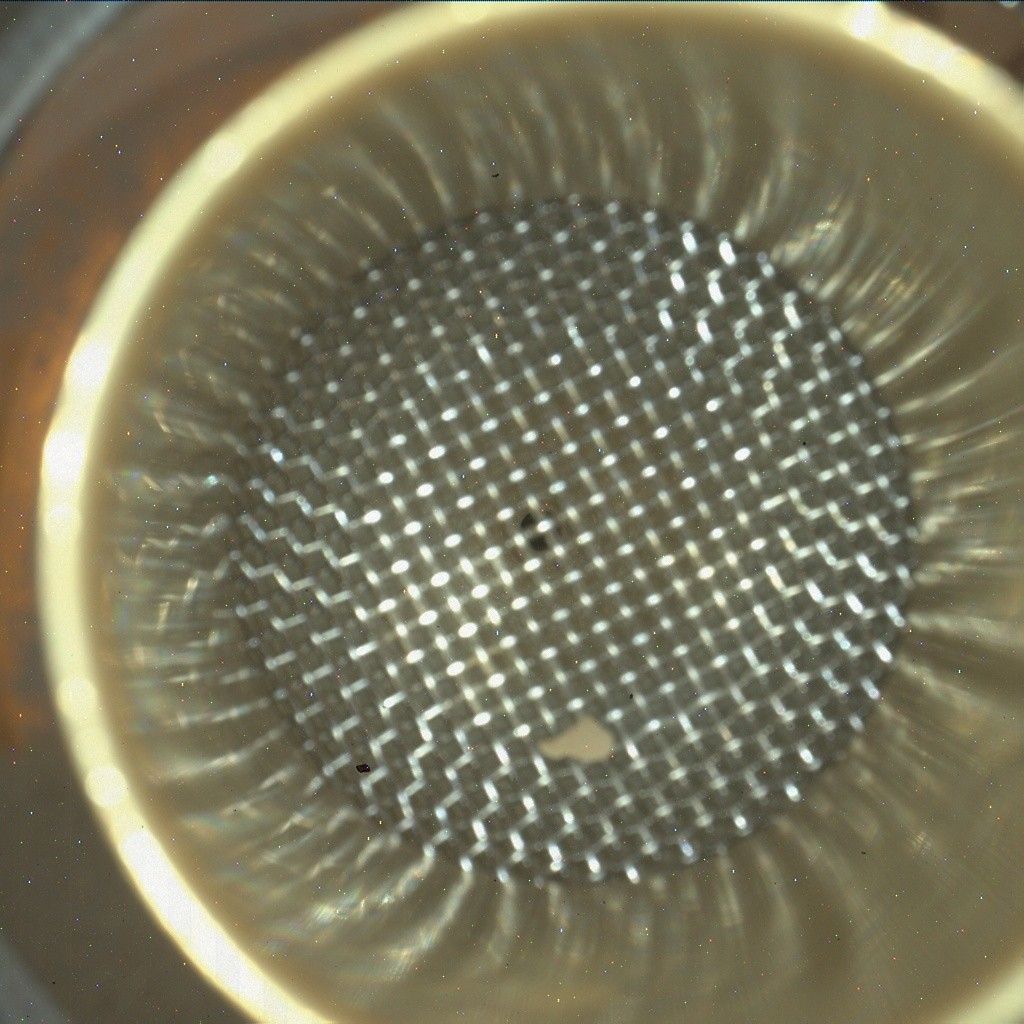

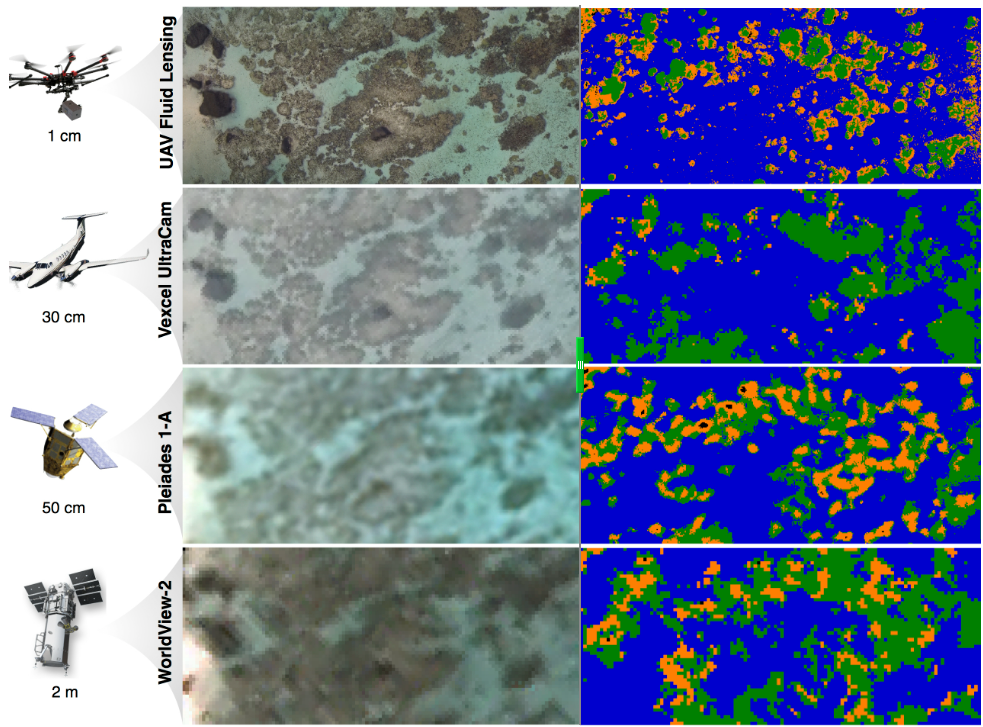

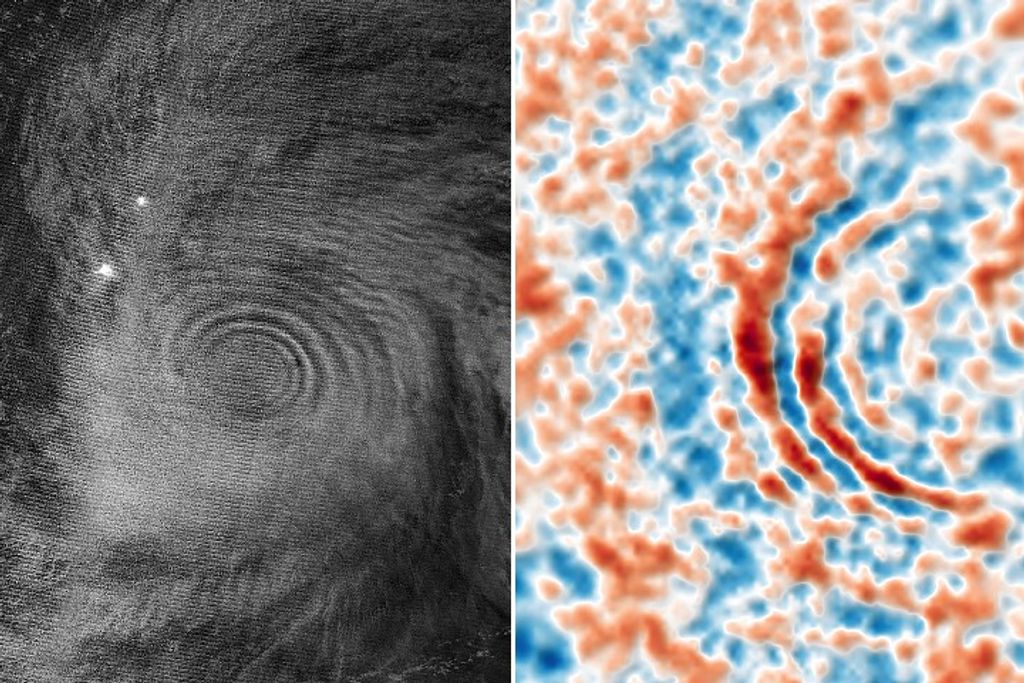

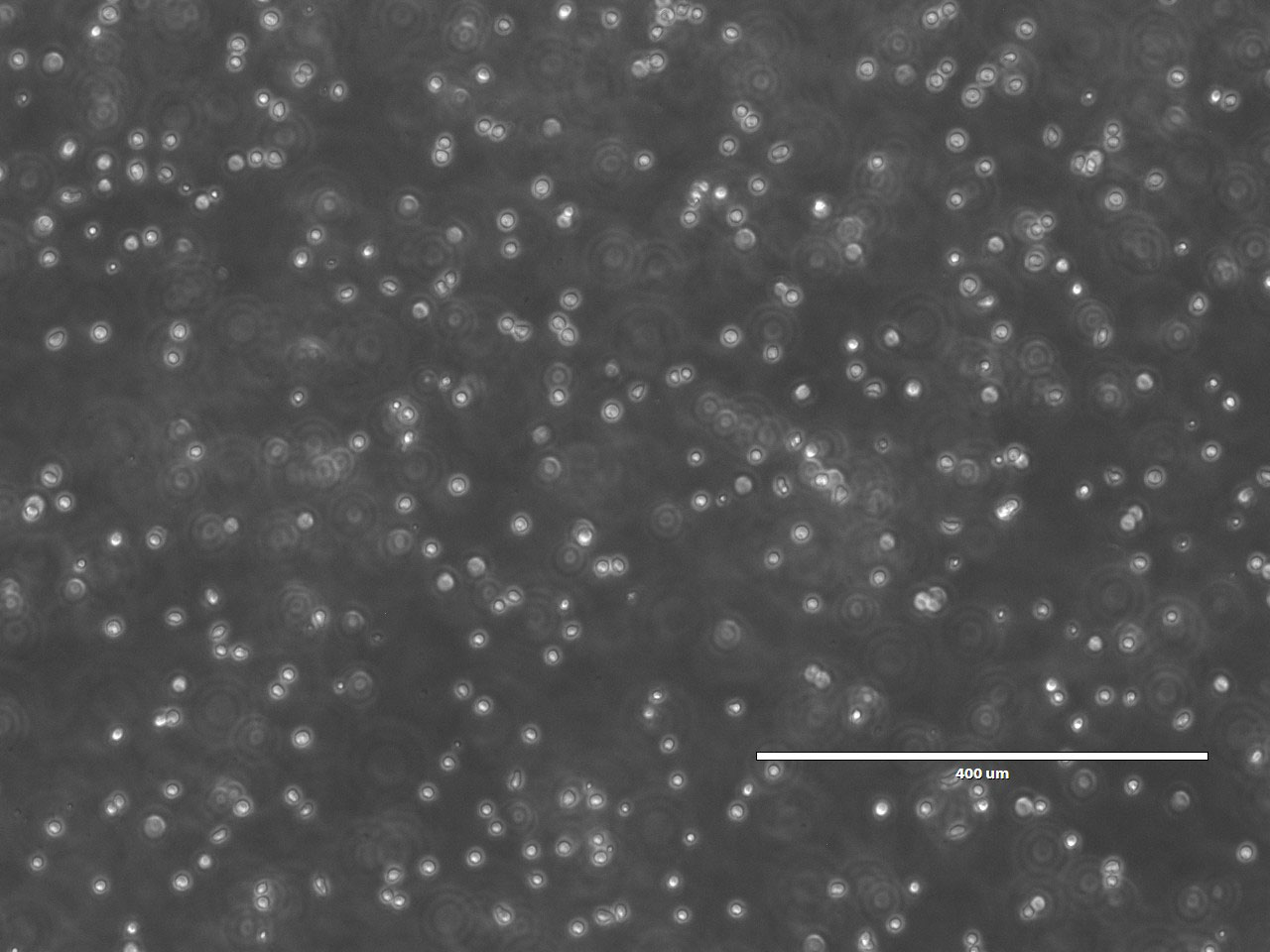

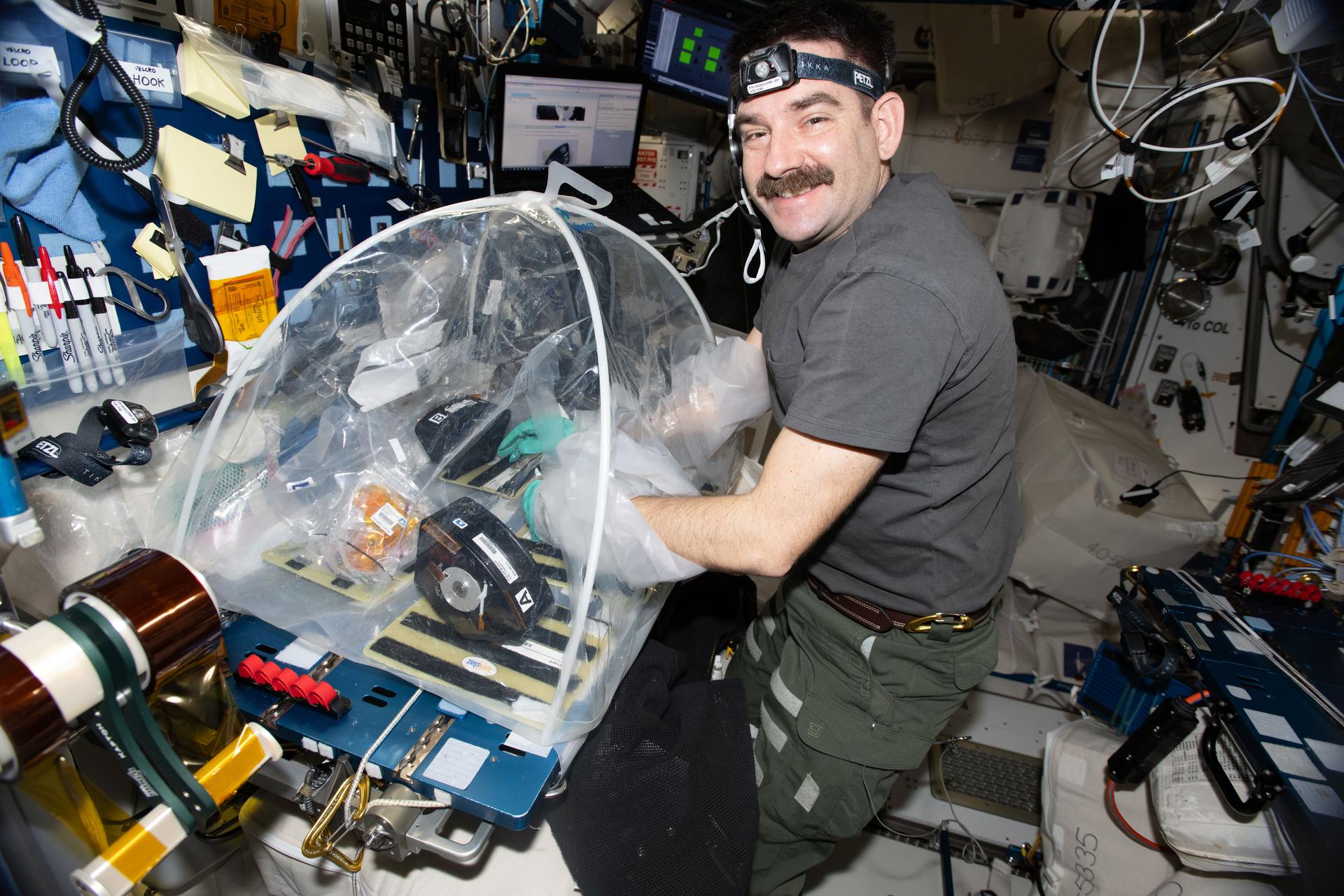

Recently, there has been increased interest in monitoring the effects of climate change on the world's marine ecosystems, particularly coral reefs. These delicate ecosystems are especially threatened because of their sensitivity to ocean warming and acidification, which have led to unprecedented levels of coral bleaching and die-off in recent years. However, current global aquatic remote sensing datasets are unable to quantify changes in marine ecosystems at the spatial and temporal scales relevant to their growth. We at the Laboratory for Advanced Sensing (LAS) are directly addressing this challenge through recent UAV imaging campaigns over coral reefs, using a new algorithm known as Fluid Lensing, which removes optical distortions caused by the fluid surface to produce centimeter-scale imagery of benthic cover. From these data products, we generated highly detailed and accurate maps of coral cover and morphology using maximum a posteriori (MAP) estimation, with error rates of 5% and 8%, respectively.
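MAP estimation assigns each pixel the class c that maximizes the posterior p(c | x) ∝ p(x | c) P(c). The page does not state which likelihood model was used, so the sketch below assumes Gaussian class-conditional densities over per-pixel feature vectors (for example, color bands). The function names, the feature layout and the synthetic data are hypothetical illustrations, not the LAS implementation.

```python
import numpy as np

def fit_gaussian_map(X, y):
    """Estimate a prior, mean and covariance for every class label.
    (Gaussian class-conditional model is an assumption for illustration.)"""
    params = {}
    for c in np.unique(y):
        Xc = X[y == c]
        params[c] = (len(Xc) / len(X),            # prior P(c)
                     Xc.mean(axis=0),              # class mean
                     np.cov(Xc, rowvar=False))     # class covariance
    return params

def map_classify(X, params):
    """Return argmax_c [log p(x | c) + log P(c)] for every row of X.
    The constant -(d/2) log(2*pi) is omitted: it is the same for all classes."""
    labels = list(params)
    scores = []
    for c in labels:
        prior, mu, cov = params[c]
        diff = X - mu
        inv = np.linalg.inv(cov)
        _, logdet = np.linalg.slogdet(cov)
        # Squared Mahalanobis distance of each sample to the class mean
        mahal = np.einsum("ij,jk,ik->i", diff, inv, diff)
        scores.append(-0.5 * (mahal + logdet) + np.log(prior))
    return np.array(labels)[np.argmax(scores, axis=0)]

# Hypothetical 3-band pixel features: 150 "inorganic" (0), 50 "organic" (1)
rng = np.random.default_rng(0)
X = np.vstack([rng.normal(0.0, 0.5, (150, 3)),
               rng.normal(2.0, 0.5, (50, 3))])
y = np.repeat([0, 1], [150, 50])

params = fit_gaussian_map(X, y)
pred = map_classify(X, params)
```

The prior term is what distinguishes MAP from maximum-likelihood classification: when the classes overlap, pixels near the boundary are pulled toward the more common class.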

In this project, we employ supervised machine learning to augment existing datasets from NASA's Earth Observing System (EOS) using high-resolution airborne imagery. Classification of benthic cover is separated into two phases: (1) discrimination by coral cover (organic vs. inorganic), and (2) discrimination by morphology (sand, rock, branching coral, or mounding coral). The method uses Principal Component Analysis (PCA) to remap and rescale existing datasets onto a known Support Vector Machine (SVM) solution within analogous principal spaces. This supervised method autonomously compensates for changing water depth and illumination conditions; classification errors for coral cover and morphology derived from aerial imagery are approximately 16% and 31%, respectively. Classification errors for data derived from the highest-resolution commercial satellite imagery available (Pleiades-1A) are approximately 21% for coral cover and 38% for morphology. Although classification accuracy is improved across both phases, morphology discrimination suffers more acutely from lower resolution and noise effects. However, the method shows promise for future work in which UAVs observe multispectral or hyperspectral data, further increasing the speed and accuracy of classification and enhancing datasets taken at higher altitudes.
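The remapping idea can be illustrated with a minimal sketch, assuming per-pixel spectral feature vectors and a linear SVM. Each dataset is projected onto its own principal axes and every component is rescaled to unit variance, so a decision boundary learned once in the reference principal space (the "known" SVM solution) can be reused on a new dataset. The unit-variance rescaling, the sign convention for the axes, the gradient-descent SVM trainer, the function names and the synthetic 4-band data are all hypothetical choices for illustration, not the project's MATLAB code.

```python
import numpy as np

def principal_space(X, k):
    """Project X onto its own top-k principal axes, then rescale each
    component to unit variance (assumed form of the 'rescale' step)."""
    Xc = X - X.mean(axis=0)
    _, _, Vt = np.linalg.svd(Xc, full_matrices=False)
    axes = Vt[:k]
    # SVD axes are defined only up to sign; fix it by making the
    # largest-magnitude loading of each axis positive.
    idx = np.argmax(np.abs(axes), axis=1)
    axes = axes * np.sign(axes[np.arange(k), idx])[:, None]
    scores = Xc @ axes.T
    return scores / scores.std(axis=0)

def train_linear_svm(Z, y, C=10.0, lr=0.05, epochs=1000):
    """Linear soft-margin SVM (labels in {-1, +1}) fitted by full-batch
    subgradient descent on 0.5*||w||^2 + C * mean(hinge loss)."""
    w, b = np.zeros(Z.shape[1]), 0.0
    for _ in range(epochs):
        active = y * (Z @ w + b) < 1          # samples violating the margin
        grad_w = w - C * (y[active][:, None] * Z[active]).sum(axis=0) / len(y)
        grad_b = -C * y[active].sum() / len(y)
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b

def predict(Z, w, b):
    return np.where(Z @ w + b >= 0, 1, -1)

# Hypothetical reference scene: 4-band pixel features for two cover classes.
rng = np.random.default_rng(0)
X_ref = np.vstack([rng.normal(0.0, 0.5, (100, 4)),    # class -1
                   rng.normal(3.0, 0.5, (100, 4))])   # class +1
y_ref = np.repeat([-1, 1], 100)

Z_ref = principal_space(X_ref, k=2)
w, b = train_linear_svm(Z_ref, y_ref)                  # the "known" SVM solution

# Same scene under a hypothetical uniform illumination change (gain + offset).
X_new = 0.6 * X_ref + 0.3
Z_new = principal_space(X_new, k=2)                    # remap and rescale
y_new = predict(Z_new, w, b)                           # reuse the reference SVM
```

In this toy case the new scene differs only by a gain and offset applied equally to every band, which leaves the principal axes unchanged, so `Z_new` reproduces `Z_ref` and the reference SVM yields identical labels. Band-dependent changes (such as wavelength-dependent attenuation with depth) generally rotate the principal axes, and this simple per-component rescaling would then only approximate the reference space.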

Classification of Coral Cover

Classification of Coral Morphology

To test our machine learning script, please download the following .zip file and run TestCoralML.m:


Note that there are a few external dependencies required for the script to run:

- MATLAB Statistics and Machine Learning Toolbox

- MATLAB Image Processing Toolbox

- VLFeat 0.9.20

- DBSCAN.m

- NASA Coral Transect 1 – Fluid Lensed – Ofu American Samoa.tiff
